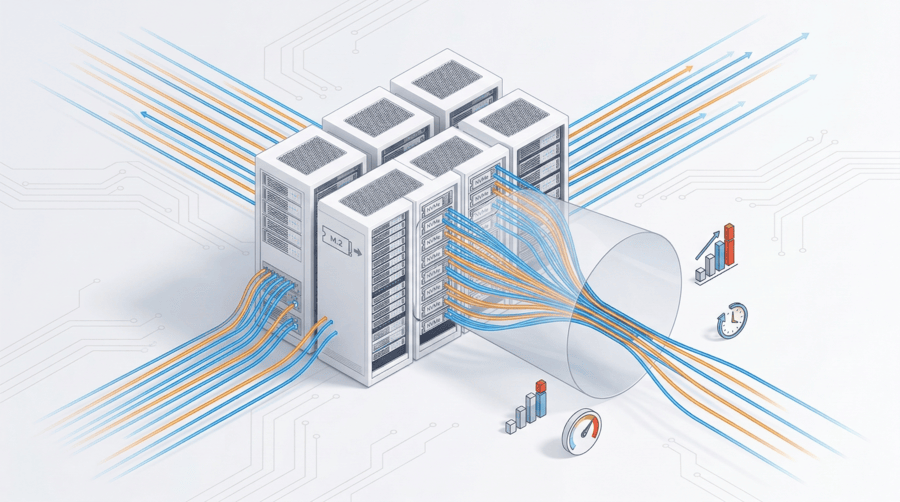

When disk latency begins climbing during peak traffic and applications start queuing read and write operations, the issue is rarely processing power. In production dedicated environments, the constraint typically surfaces at the storage layer. Transactions stall. Virtual machines pause. Databases take longer to commit. What looks like general system slowdown is often a storage throughput bottleneck shaping behavior beneath the surface.

In dedicated server deployments supporting ecommerce platforms, ERP systems, SaaS applications, financial databases, or AI workloads, storage architecture determines whether performance scales smoothly or collapses under concurrency.

Understanding server I/O bottlenecks requires looking beyond raw disk capacity and focusing on throughput ceilings, latency consistency, RAID design, controller architecture, and storage media selection.

The Expanding Gap Between Compute and Storage

Modern CPUs deliver massive parallel processing power. Memory bandwidth continues to increase. PCIe lanes multiply. Yet disk performance improvements have historically moved at a slower pace.

This widening performance gap creates a structural imbalance. Applications generate I/O requests faster than storage systems can complete them. As concurrency increases, latency rises disproportionately. Queue depth grows. Eventually users experience slow read and write speeds even when CPU usage appears healthy.

The bottleneck is not about storage size. It is about how fast data can be delivered under sustained load.
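The disproportionate rise in latency can be illustrated with a deliberately simple queueing model. The sketch below assumes a single-server M/M/1 queue, where mean response time is service time divided by (1 - utilization). Real arrays serve requests in parallel and will not follow this curve exactly, and the 0.2 ms service time is an assumed figure, not a measurement.

```python
def mean_response_time_ms(service_time_ms: float, utilization: float) -> float:
    """Mean response time of an M/M/1 queue: R = S / (1 - rho)."""
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in [0, 1)")
    return service_time_ms / (1.0 - utilization)


if __name__ == "__main__":
    service_time_ms = 0.2  # assumed per-request device service time
    for utilization in (0.50, 0.80, 0.90, 0.95, 0.99):
        latency = mean_response_time_ms(service_time_ms, utilization)
        print(f"utilization {utilization:.0%}: mean latency {latency:.2f} ms")
```

In this model, moving from 50% to 90% utilization multiplies mean latency by five, which is why a storage layer that looks comfortable at moderate load can degrade sharply at peak.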

Disk Performance Issues: Mechanical Limits and Controller Saturation

Traditional HDD-based arrays remain constrained by mechanical seek time and rotational delay. Even enterprise-grade SAS drives struggle under high random I/O conditions.

Solid state drives remove mechanical latency, but they do not automatically eliminate disk performance issues. Storage controllers, firmware inefficiencies, cache limitations, and bus bottlenecks can all restrict throughput.

Common causes of storage constraints include:

- Controller saturation, where all I/O funnels through limited processing units

- Insufficient IOPS capacity relative to workload demand

- PCIe lane under-allocation

- Write-heavy database logging overwhelming parity calculations

- Virtualized environments blending sequential workloads into random access patterns

The virtualization I/O blender effect is particularly relevant. When multiple VMs share storage, their individually sequential request streams are interleaved before they reach the array, so the array sees a largely random pattern. This reduces the ability of controllers to optimize read-ahead and write coalescing.
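A small simulation makes the blender effect concrete. This is a sketch under assumptions: each hypothetical VM reads consecutive blocks from its own region, and requests from different VMs arrive in random order. The functions `sequential_fraction` and `blend` are illustrative names, not part of any hypervisor or storage API.

```python
import random


def sequential_fraction(requests):
    """Fraction of requests whose block address directly follows the previous one."""
    if len(requests) < 2:
        return 1.0
    hits = sum(1 for prev, cur in zip(requests, requests[1:]) if cur == prev + 1)
    return hits / (len(requests) - 1)


def blend(streams, seed=0):
    """Interleave per-VM streams in random arrival order, keeping each VM's own order."""
    rng = random.Random(seed)
    cursors = [0] * len(streams)
    active = [i for i, stream in enumerate(streams) if stream]
    merged = []
    while active:
        vm = rng.choice(active)
        merged.append(streams[vm][cursors[vm]])
        cursors[vm] += 1
        if cursors[vm] == len(streams[vm]):
            active.remove(vm)
    return merged


if __name__ == "__main__":
    # Eight hypothetical VMs, each reading 1,000 consecutive blocks from its own region.
    streams = [list(range(vm * 1_000_000, vm * 1_000_000 + 1000)) for vm in range(8)]
    print(f"single VM:     {sequential_fraction(streams[0]):.0%} sequential")
    print(f"8 VMs blended: {sequential_fraction(blend(streams)):.0%} sequential")
```

Each stream is fully sequential on its own, yet only a small share of adjacent requests in the blended stream remain sequential, which is what the shared controller actually has to service.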

RAID Storage Performance: Balancing Protection and Speed

RAID configuration directly impacts throughput behavior.

RAID 5 and RAID 6 introduce parity overhead. Under heavy write workloads, parity calculation increases latency and can amplify bottlenecks. During rebuild operations, performance degradation becomes even more visible.

RAID 10 mirrors and stripes data, offering stronger write consistency and lower latency for transactional systems. While it requires additional drives, it typically delivers more predictable RAID storage performance in high-concurrency environments.

Optimizing for usable capacity instead of I/O behavior is a common architectural mistake. In production workloads, storage design must prioritize consistency under load.
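The trade-off between parity and mirroring can be estimated with the conventional write-penalty rule of thumb: each front-end write costs about 2 back-end I/Os on RAID 10, 4 on RAID 5, and 6 on RAID 6. The sketch below applies that rule. It ignores controller cache and full-stripe writes, and the drive count, per-drive IOPS, and write ratio are assumed example values.

```python
# Rule-of-thumb back-end I/Os generated per front-end write.
WRITE_PENALTY = {"raid0": 1, "raid10": 2, "raid5": 4, "raid6": 6}


def effective_iops(drive_count, iops_per_drive, write_fraction, raid_level):
    """Estimate front-end IOPS for a given read/write mix and RAID level."""
    if not 0.0 <= write_fraction <= 1.0:
        raise ValueError("write_fraction must be between 0 and 1")
    raw_iops = drive_count * iops_per_drive
    penalty = WRITE_PENALTY[raid_level]
    return raw_iops / ((1.0 - write_fraction) + write_fraction * penalty)


if __name__ == "__main__":
    # Assumed example: 8 drives at 10,000 IOPS each, 70% writes.
    for level in ("raid10", "raid5", "raid6"):
        print(f"{level}: {effective_iops(8, 10_000, 0.7, level):,.0f} IOPS")
```

Under these assumptions the same eight drives deliver roughly 47,000 IOPS as RAID 10, 26,000 as RAID 5, and 18,000 as RAID 6, which is the cost of choosing usable capacity over write behavior.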

NVMe vs SATA SSD Performance: Interface Matters

Not all flash storage performs equally. NVMe drives are also SSDs, so the real comparison is NVMe versus SATA SSD, and the difference lies in the interface and command architecture.

SATA SSDs operate within legacy interface constraints. NVMe leverages PCIe and supports deep parallel queues, significantly reducing latency and increasing sustained throughput.

For high-frequency transactions, AI inference, containerized applications, and analytics workloads, NVMe storage helps ensure compute resources are not waiting on data delivery. When storage throughput cannot match processing speed, system performance plateaus regardless of CPU investment.
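The interface gap can be put in numbers from the published link rates. The sketch below computes link-level payload ceilings only (line rate minus encoding overhead); real drives deliver less after protocol overhead. The queue figures are specification upper bounds for AHCI and NVMe, and shipping NVMe drives typically expose far fewer queues.

```python
def sata3_payload_mb_s() -> float:
    """SATA III: 6 Gb/s line rate with 8b/10b encoding."""
    return 6e9 * 8 / 10 / 8 / 1e6


def pcie_payload_mb_s(gigatransfers_per_s: float, lanes: int) -> float:
    """PCIe 3.0 and later: 128b/130b encoding, per-lane rate times lane count."""
    return gigatransfers_per_s * 1e9 * 128 / 130 / 8 / 1e6 * lanes


# Outstanding-command ceilings defined by the host interface specifications.
AHCI_MAX_OUTSTANDING = 1 * 32            # one queue of 32 commands
NVME_MAX_OUTSTANDING = 65_535 * 65_536   # spec upper bound: I/O queues x entries

if __name__ == "__main__":
    print(f"SATA III:    {sata3_payload_mb_s():,.0f} MB/s")
    print(f"PCIe 3.0 x4: {pcie_payload_mb_s(8, 4):,.0f} MB/s")
    print(f"PCIe 4.0 x4: {pcie_payload_mb_s(16, 4):,.0f} MB/s")
    print(f"AHCI outstanding commands: {AHCI_MAX_OUTSTANDING}")
    print(f"NVMe outstanding commands (spec ceiling): {NVME_MAX_OUTSTANDING:,}")
```

A SATA SSD tops out near 600 MB/s with a single 32-command queue, while an NVMe drive on four PCIe 3.0 lanes has a link ceiling more than six times higher and can keep many queues busy in parallel.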

Network and Fabric-Induced Bottlenecks

Dedicated server storage performance can also be influenced by network architecture. In SAN or distributed environments, insufficient uplink bandwidth, oversubscribed switching layers, or cross-border routing inefficiencies can restrict effective I/O.

In Asia Pacific deployments, latency on routes between mainland China and international networks adds complexity. Packet retransmission and route congestion can indirectly increase storage wait times.

Dedicated infrastructure with optimized routing and controlled bandwidth allocation helps stabilize I/O performance across regions.

Workload Alignment and I/O Patterns

Different workloads produce different I/O behaviors:

- OLTP databases generate small, random, write-intensive operations

- Media streaming and backup systems rely on large sequential throughput

- AI pipelines require sustained high-bandwidth reads

- Logging systems create burst-heavy write patterns

Mismatch between workload pattern and storage configuration is a primary cause of server I/O bottlenecks. Scaling capacity without addressing access pattern alignment rarely resolves performance instability.
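The difference between these patterns shows up in the basic relationship bandwidth = IOPS x block size. The sketch below uses assumed figures for a hypothetical OLTP workload and a hypothetical backup job to show why one is bound by operations per second and the other by bandwidth.

```python
def bandwidth_mb_s(iops: float, block_size_kib: float) -> float:
    """Bandwidth in MB/s implied by an IOPS rate at a given block size."""
    return iops * block_size_kib * 1024 / 1e6


if __name__ == "__main__":
    # Assumed workloads, for illustration only.
    workloads = {
        "OLTP (4 KiB random)": (50_000, 4),
        "Backup (1 MiB sequential)": (2_000, 1024),
    }
    for name, (iops, block_kib) in workloads.items():
        print(f"{name}: {iops:,} IOPS -> {bandwidth_mb_s(iops, block_kib):,.1f} MB/s")
```

Here the OLTP workload needs 25 times the IOPS of the backup job yet moves about a tenth of the data, so a configuration sized on bandwidth alone would leave it starved.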

Rebuild Windows and Degraded State Risk

As disk sizes grow, RAID rebuild times increase. During rebuild, arrays operate in degraded mode, placing additional stress on remaining drives and reducing available throughput.

Long rebuild windows extend exposure to a second failure and may intensify bottlenecks during peak activity. All-flash NVMe arrays can significantly shorten rebuild cycles thanks to parallelism and higher throughput, which improves resilience and helps maintain stability.
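A back-of-the-envelope estimate shows how rebuild windows scale with drive size and rebuild rate. This sketch assumes the rebuild runs at a constant rate and has to write the full capacity of the replaced drive. Both example rates are assumptions, since real rebuild speed depends on the controller, the RAID level, and competing production load.

```python
def rebuild_hours(capacity_tb: float, rebuild_rate_mb_s: float) -> float:
    """Hours needed to rewrite a full drive at a constant rebuild rate."""
    if rebuild_rate_mb_s <= 0:
        raise ValueError("rebuild_rate_mb_s must be positive")
    seconds = capacity_tb * 1e12 / (rebuild_rate_mb_s * 1e6)
    return seconds / 3600


if __name__ == "__main__":
    # Assumed rates: a throttled HDD rebuild versus an NVMe rebuild.
    print(f"16 TB HDD at 100 MB/s:    {rebuild_hours(16, 100):.1f} hours")
    print(f"4 TB NVMe at 1,000 MB/s:  {rebuild_hours(4, 1000):.1f} hours")
```

Under these assumptions the HDD array spends nearly two days in degraded mode, while the NVMe array is back to full redundancy in a little over an hour.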

Monitoring and Diagnosing Bottlenecks

Effective diagnosis requires more than checking disk utilization percentages.

Key indicators include:

- Latency distribution (percentiles) rather than averages

- Queue depth trends

- IOPS relative to drive capability

- Controller CPU load

- Cache hit ratios
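The first indicator deserves a short illustration, because averages hide tail latency. The sketch below uses a synthetic sample set (an assumption, not measured data) in which 2% of requests stall, and compares the mean with nearest-rank percentiles.

```python
import math
import statistics


def percentile(samples, pct):
    """Nearest-rank percentile of a list of latency samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


if __name__ == "__main__":
    # Synthetic latencies in ms: 980 fast requests and 20 stalled ones.
    latencies_ms = [0.3] * 980 + [40.0] * 20
    print(f"mean: {statistics.mean(latencies_ms):.3f} ms")
    print(f"p50:  {percentile(latencies_ms, 50)} ms")
    print(f"p99:  {percentile(latencies_ms, 99)} ms")
```

The mean of about 1.1 ms looks healthy, but the 99th percentile sits at 40 ms, which is the latency the slowest transactions actually experience.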

Tools such as iostat, vmstat, and advanced monitoring platforms provide insight into whether storage or another subsystem is constraining performance.
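For a sense of where such numbers come from, the sketch below derives iostat-style metrics from two snapshots of the Linux /proc/diskstats counters. It is Linux-only and relies on the documented field order of that file. The helper names `parse_diskstats` and `interval_metrics` are hypothetical, and the one-second interval is an arbitrary choice.

```python
import os
import time


def parse_diskstats(text):
    """Parse /proc/diskstats content into {device: cumulative counters}."""
    stats = {}
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 14:
            continue
        stats[fields[2]] = {
            "reads": int(fields[3]),            # reads completed
            "read_ms": int(fields[6]),          # time spent reading
            "writes": int(fields[7]),           # writes completed
            "write_ms": int(fields[10]),        # time spent writing
            "io_ms": int(fields[12]),           # time the device was busy
            "weighted_io_ms": int(fields[13]),  # busy time weighted by queue size
        }
    return stats


def interval_metrics(before, after, interval_s):
    """Derive per-interval metrics from two cumulative counter snapshots."""
    ios = (after["reads"] - before["reads"]) + (after["writes"] - before["writes"])
    wait_ms = (after["read_ms"] - before["read_ms"]) + (after["write_ms"] - before["write_ms"])
    interval_ms = interval_s * 1000
    return {
        "iops": ios / interval_s,
        "await_ms": wait_ms / ios if ios else 0.0,
        "util_pct": 100 * (after["io_ms"] - before["io_ms"]) / interval_ms,
        "avg_queue": (after["weighted_io_ms"] - before["weighted_io_ms"]) / interval_ms,
    }


def snapshot(path="/proc/diskstats"):
    with open(path) as handle:
        return parse_diskstats(handle.read())


if __name__ == "__main__" and os.path.exists("/proc/diskstats"):
    first = snapshot()
    time.sleep(1.0)
    second = snapshot()
    for device, counters in second.items():
        if device in first:
            print(device, interval_metrics(first[device], counters, 1.0))
```

Rising `await_ms` and `avg_queue` while `iops` stays flat is a typical sign that the device, not the application, is the limiting factor.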

Baseline measurement is essential before architectural adjustments are made.

Preventing Storage Throughput Bottlenecks

Mitigation requires structural decisions:

- Align RAID type with workload behavior

- Deploy NVMe for latency-sensitive systems

- Separate production and backup workloads

- Ensure adequate PCIe and bus bandwidth

- Scale network fabric proportionally with storage capability

- Avoid shared storage contention in high-concurrency environments

Storage must scale in proportion to compute. Increasing CPU cores in a storage-constrained system only increases I/O pressure.

Dedicated Infrastructure and Hardware Isolation

Multi-tenant environments introduce unpredictable I/O contention. Neighbor workloads may consume controller resources or saturate shared arrays.

Single-tenant dedicated infrastructure removes noisy-neighbor interference and makes storage performance more consistent.

Dataplugs deploys NVMe SSD all-flash dedicated servers within secure Tier III data center facilities across Asia Pacific. By combining hardware isolation with high-throughput flash architecture and optimized connectivity, storage and network layers operate within predictable performance boundaries.

This approach supports financial platforms, blockchain nodes, SaaS applications, and ecommerce systems that require stable latency under sustained concurrency.

Conclusion

Storage throughput bottlenecks stem from architectural imbalance between compute power and I/O capability. Controller design, RAID selection, storage interface, workload pattern, and network topology all influence dedicated server storage performance.

Capacity alone does not guarantee speed. Throughput consistency under load defines real performance.

For organizations seeking NVMe-based single-tenant infrastructure engineered to minimize server I/O bottlenecks, Dataplugs provides dedicated environments optimized for stability and scalability. Connect with the team via live chat or at sales@dataplugs.com to discuss infrastructure aligned with your performance objectives.