AI writing tools make the writing part faster, but writing was never the hard part.

The hard part in content marketing is the information—ideas, verified facts, and reference material. And that’s exactly where these tools fall short.

I learned this after generating 40 articles through Claude. I’d tried the writing tools first, but they just couldn’t handle the part that actually matters. And by “AI writing tools” I mean the platforms built on top of LLMs—Jasper, Frase, Writesonic, that category. What I used instead was the LLM directly, with my own files and process around it.

In this article, I’m sharing the five problems I ran into and how I handle them now.

I’m not naming the specific tools I tested. They’re not bad products. If you don’t have strong writing or SEO skills, or you don’t have time for a more hands-on process, they’re a fine choice. That content is better than no content. But if you have the skills and want to push quality, they become the ceiling, not the floor.

Claude skills, if/when you decide to use automated content workflows.

Tip: For the most important steps, I ran my prompts twice, or ran the same check through a second AI to catch anything the first one missed.
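As a rough illustration of that double-check (a sketch, not the exact setup used for these articles), the idea is to run one prompt through two passes and flag anything only one of them reported. `first_pass` and `second_pass` are hypothetical stand-ins for real model calls, and matching findings line by line is a simplifying assumption; real outputs would need fuzzier comparison.

```python
from typing import Callable, Dict, Iterable


def cross_check(prompt: str, checkers: Iterable[Callable[[str], str]]) -> Dict[str, int]:
    """Run one prompt through several checkers and count how many reported each finding.

    Each checker is assumed to return one finding per line.
    """
    counts: Dict[str, int] = {}
    for check in checkers:
        # De-duplicate within a single run so a repeated line is not counted twice.
        findings = {
            line.strip().lower()
            for line in check(prompt).splitlines()
            if line.strip()
        }
        for finding in findings:
            counts[finding] = counts.get(finding, 0) + 1
    return counts


# Stand-ins for two real model calls (hypothetical outputs, for illustration only).
def first_pass(prompt: str) -> str:
    return "Stat in paragraph 2 has no source\nProduct name misspelled"


def second_pass(prompt: str) -> str:
    return "Stat in paragraph 2 has no source\nQuote attributed to wrong person"


results = cross_check("List factual problems in this draft: ...", [first_pass, second_pass])

# Findings reported by only one pass are the ones a single run would have missed.
missed_by_one = sorted(f for f, n in results.items() if n == 1)
```

Findings that both passes agree on are probably real; the ones in `missed_by_one` are where a human look pays off most.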

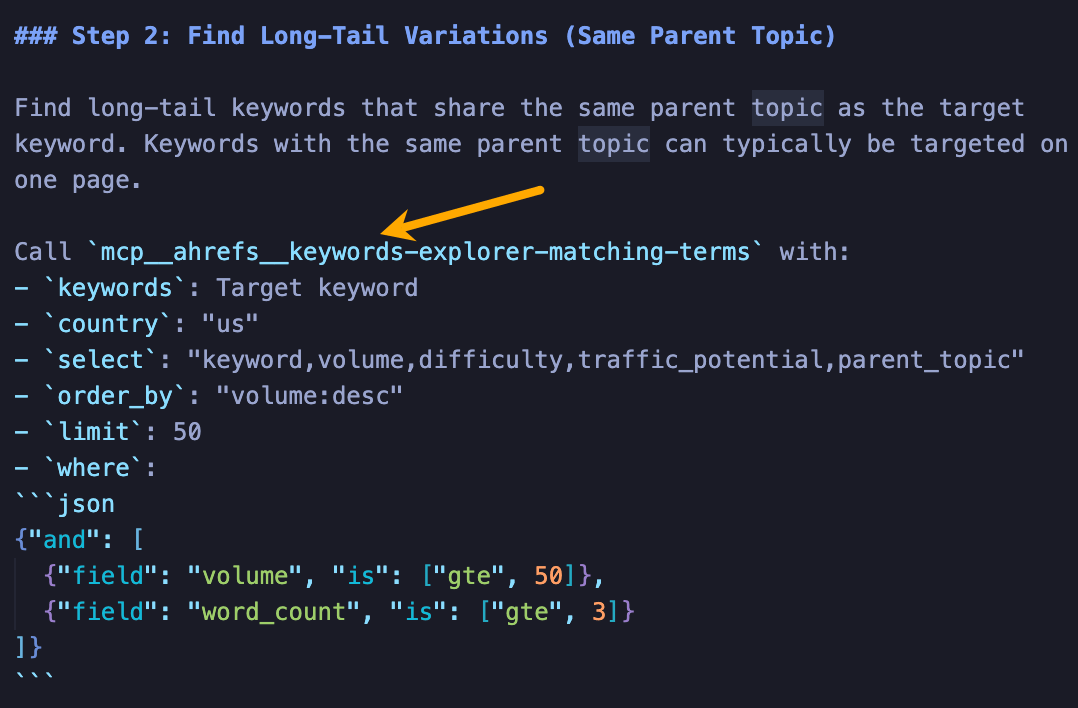

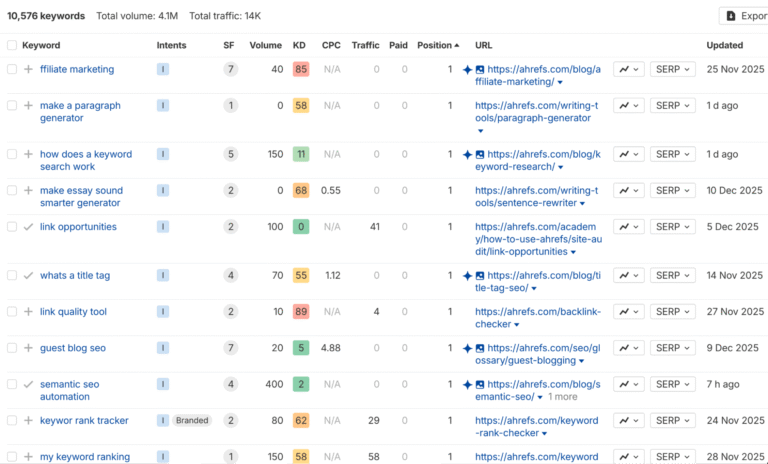

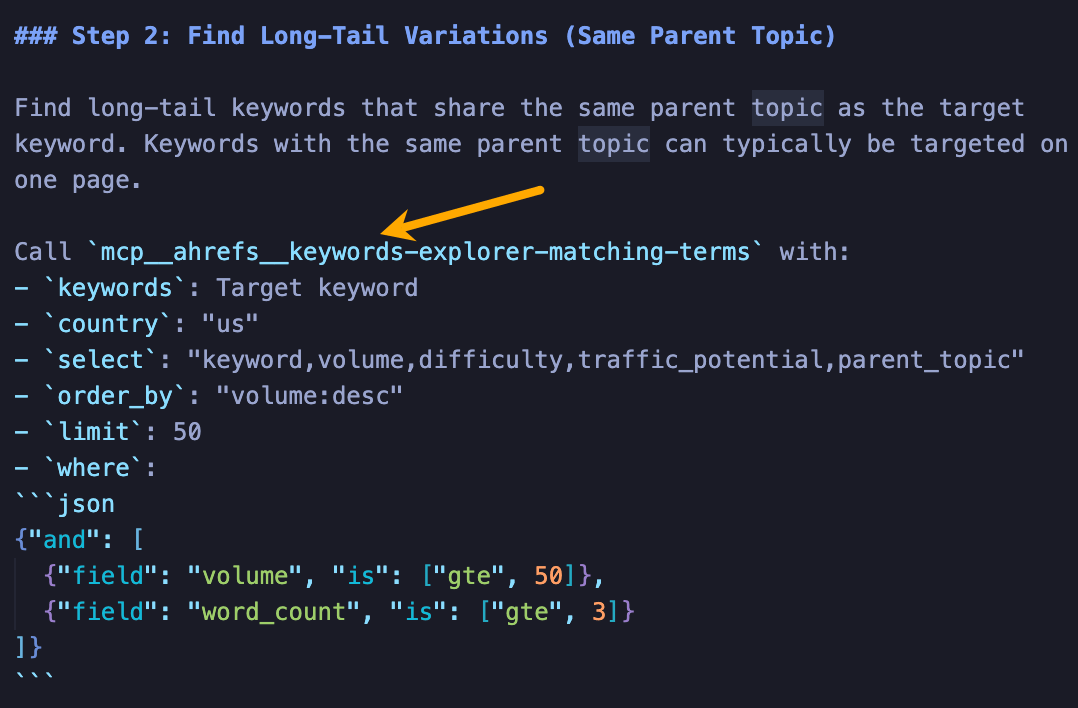

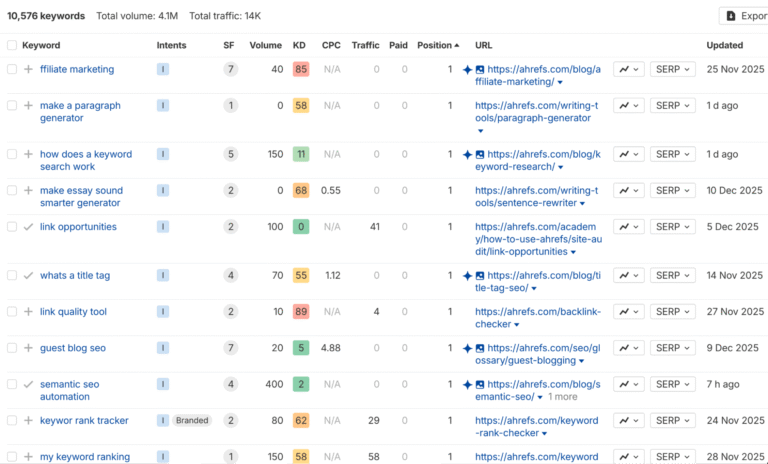

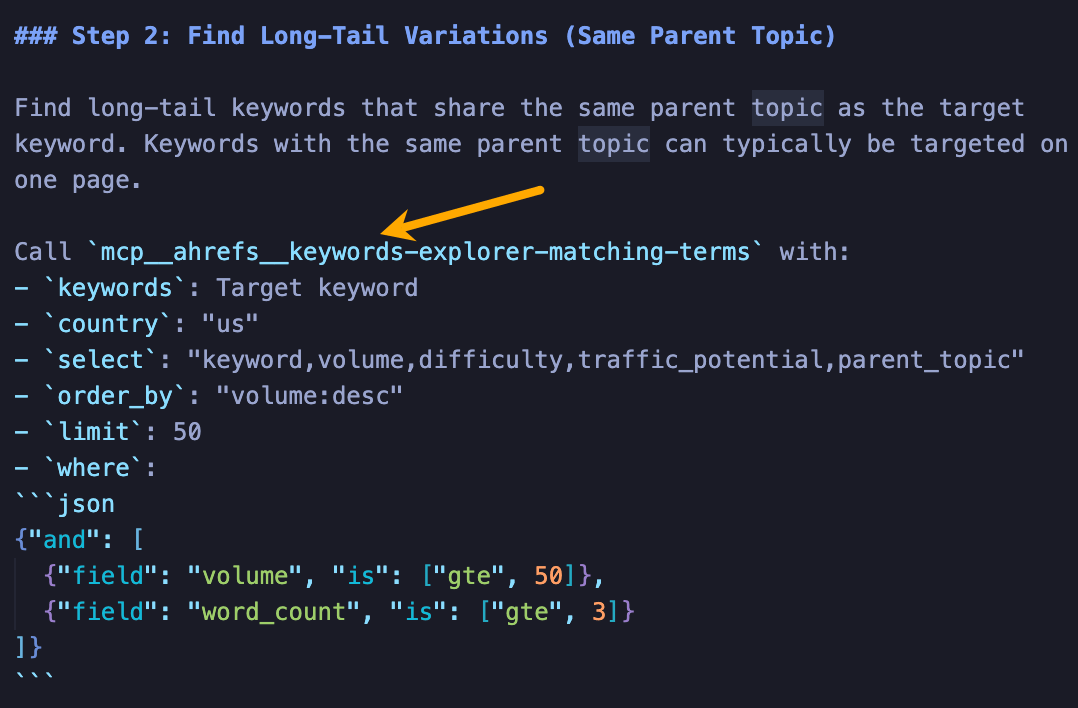

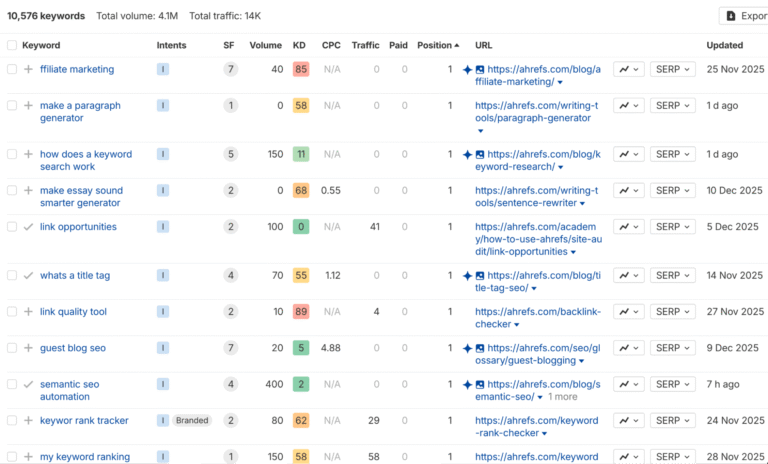

MCP integrations like Ahrefs’ let you pipe real data directly into these workflows—we’re experimenting with a full Claude Code pipeline where SEO research happens automatically. If your tool doesn’t support MCP yet, pull the data manually. Even screenshots work, as long as you give the AI specific data to work on.
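If you are pulling data by hand, one way to give the AI something specific to work on is to paste a trimmed export straight into the prompt. The sketch below assumes a CSV export; the column names and rows are made-up placeholders, not real keyword data or any tool's actual export format.

```python
import csv
import io
from typing import List


def data_block(csv_text: str, columns: List[str], limit: int = 20) -> str:
    """Turn selected columns of a CSV export into a compact text table for a prompt."""
    reader = csv.DictReader(io.StringIO(csv_text))
    lines = [" | ".join(columns)]
    for i, row in enumerate(reader):
        if i >= limit:
            # Keep the prompt short: only the first `limit` rows are included.
            break
        lines.append(" | ".join(row[c].strip() for c in columns))
    return "\n".join(lines)


# Placeholder export (hypothetical columns and values).
export = """keyword,volume,difficulty
ai writing tools,1000,50
content brief template,500,20
"""

prompt = (
    "Use only the keyword data below when suggesting topics.\n\n"
    + data_block(export, ["keyword", "volume"])
)
```

Telling the model to use only the pasted data keeps it from filling gaps with guesses.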

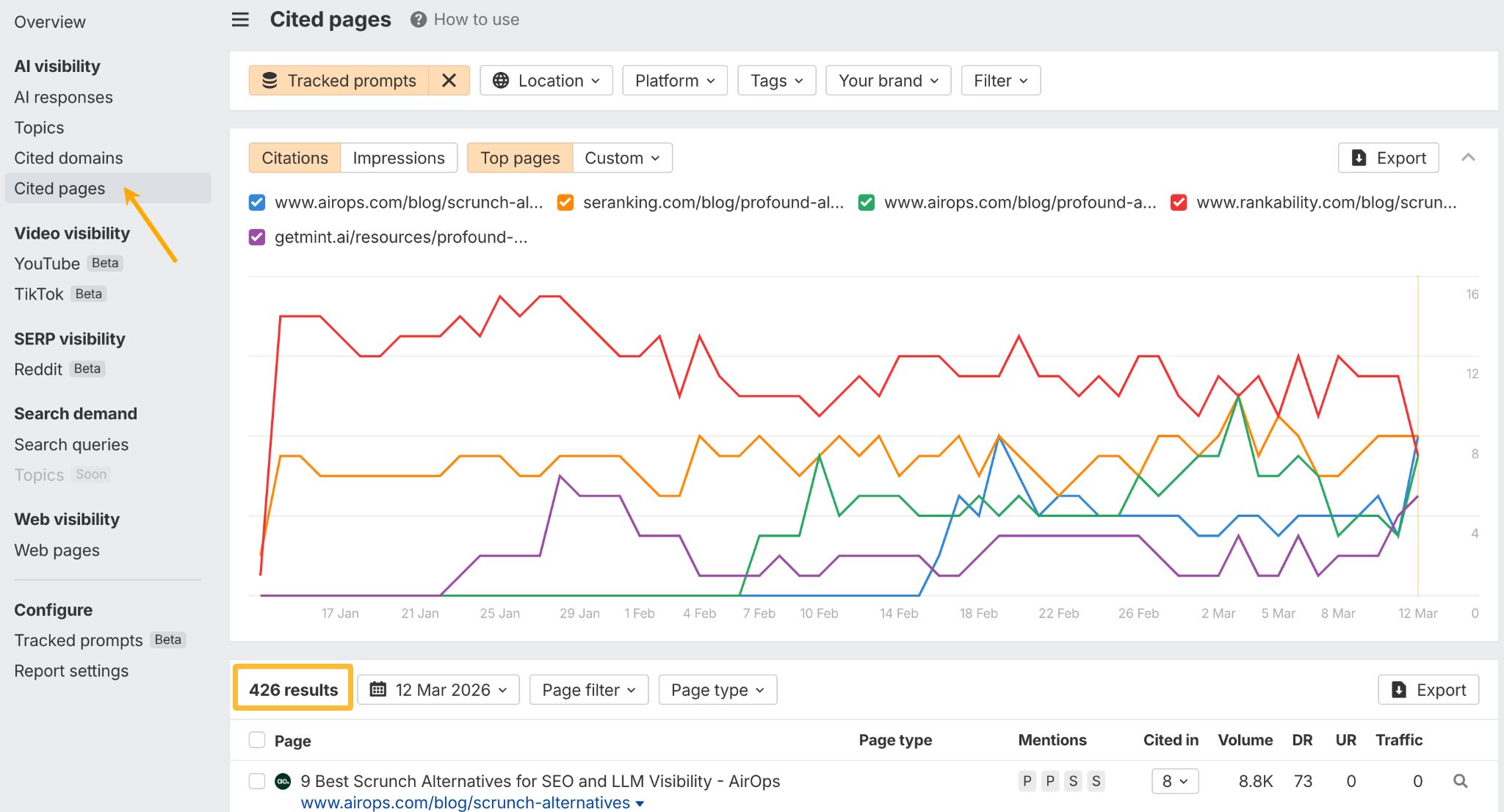

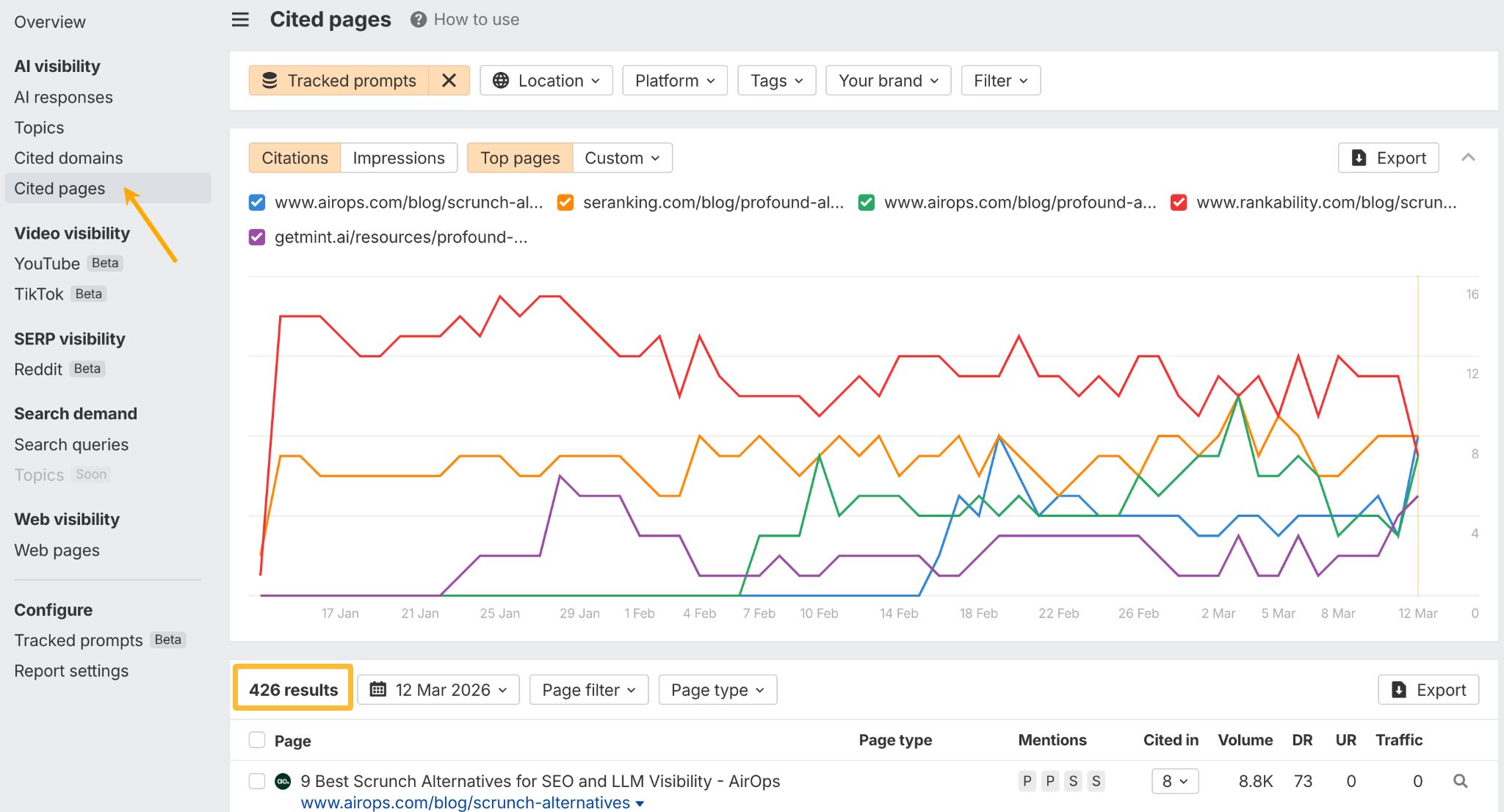

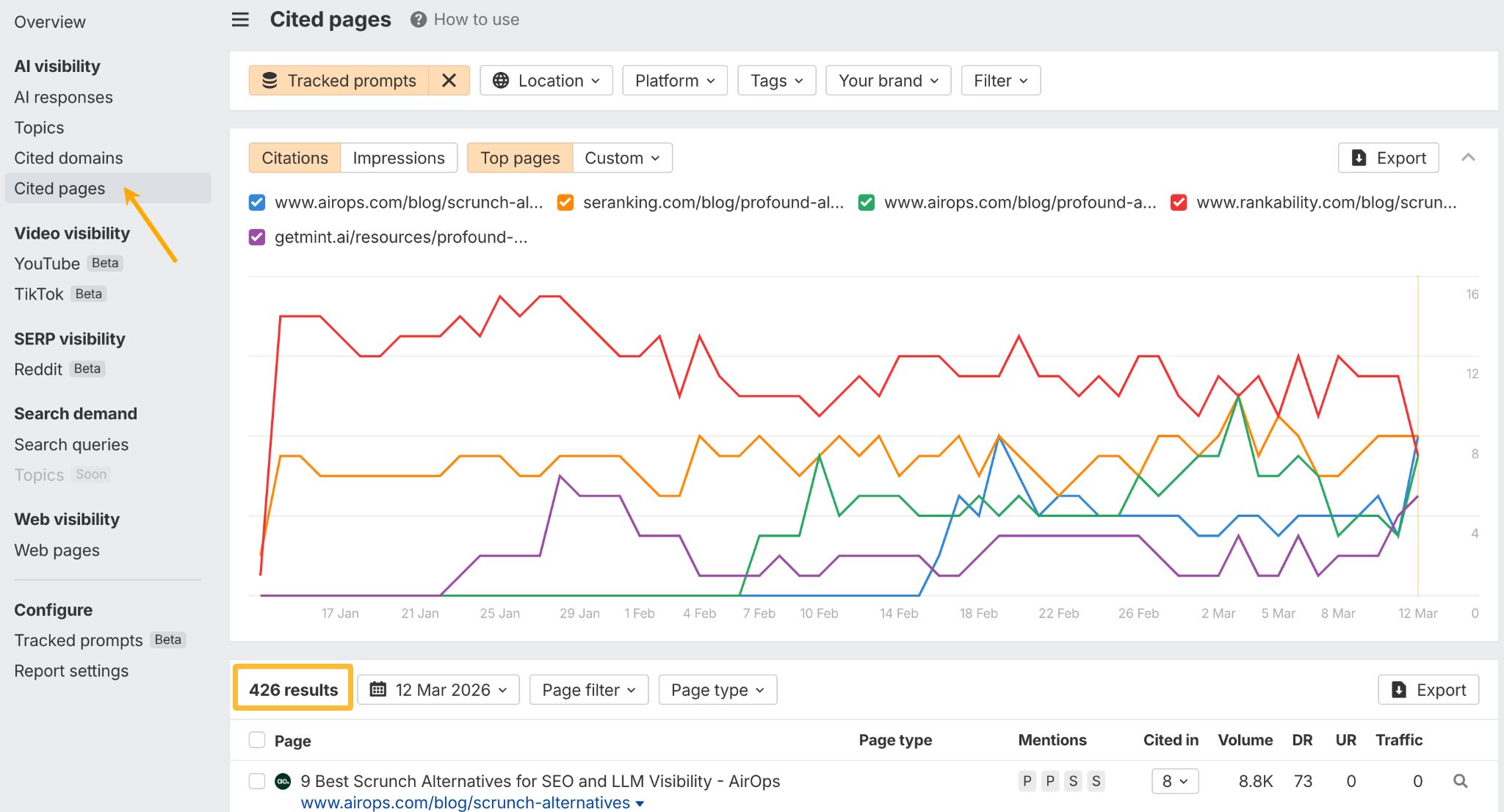

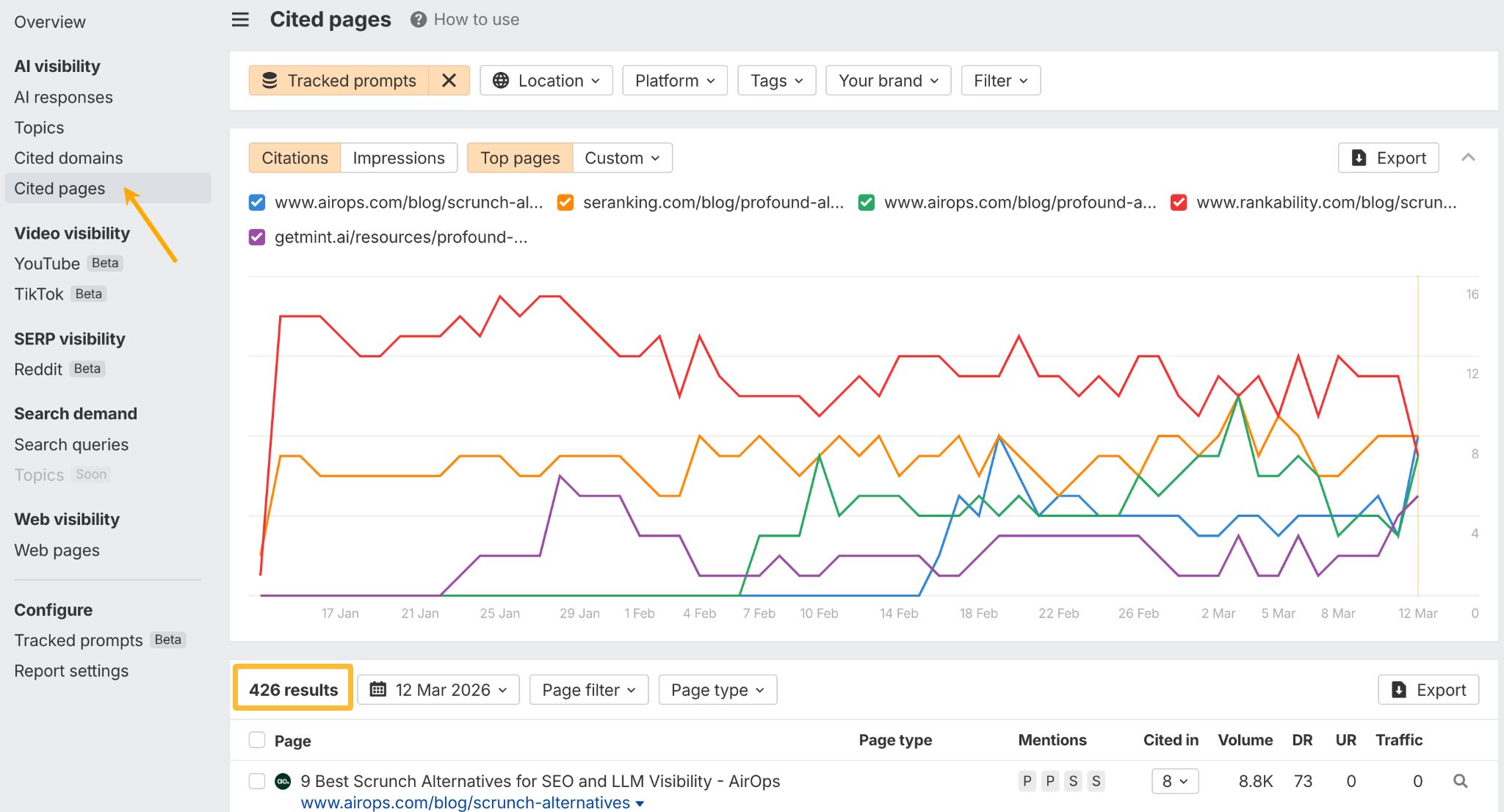

Ahrefs’ Brand Radar), then had Claude go through these pages to extract the structure and use that as an outline template for content generation. Then I asked it to weave in my own ideas. Research, structure, writing—all in one conversation, controlling every stage.

But maybe I’m wrong. Maybe a writing tool with everything on board is more your style. I’ll leave it to you to decide what makes more sense economically. I don’t want to tell you what to do with your money, but I know that for my needs, I’m never going back to AI writing tools.

There’s also something a bit self-defeating about the AI tool ecosystem. Every time an LLM provider releases a better model, many of the tools built on top of it lose part of their reason to exist.

My solution: invest more in what you feed the AI

Redirect time and money toward:

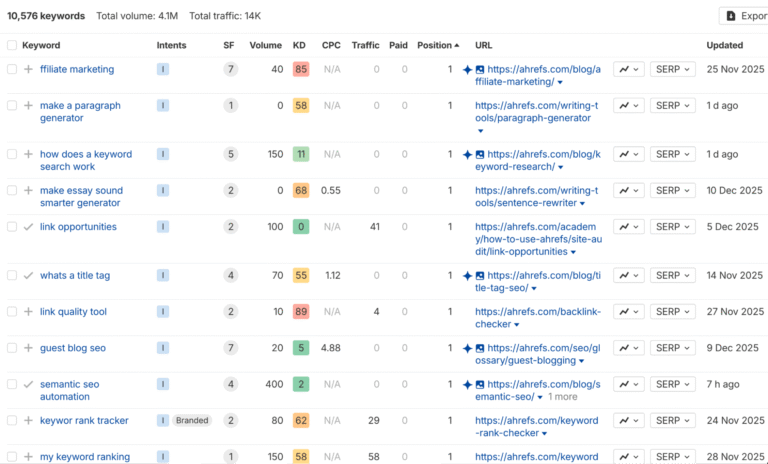

- Research tools that go deep. Rich keyword data, search intent analysis, competitive gaps, AI-preferred content formats, etc. Writing tools bolt on a surface-level version of this. Dedicated platforms have years of infrastructure behind them (here’s ours).

- Your editorial system. Prompt libraries, fact-checking workflows, style enforcement, Claude or Codex skills. The stuff that keeps your judgment in the loop at every stage. Same principle as the reference files: invest in the inputs.

This setup also makes it easier to adapt when models change or your content needs shift. It’ll click after the next section.

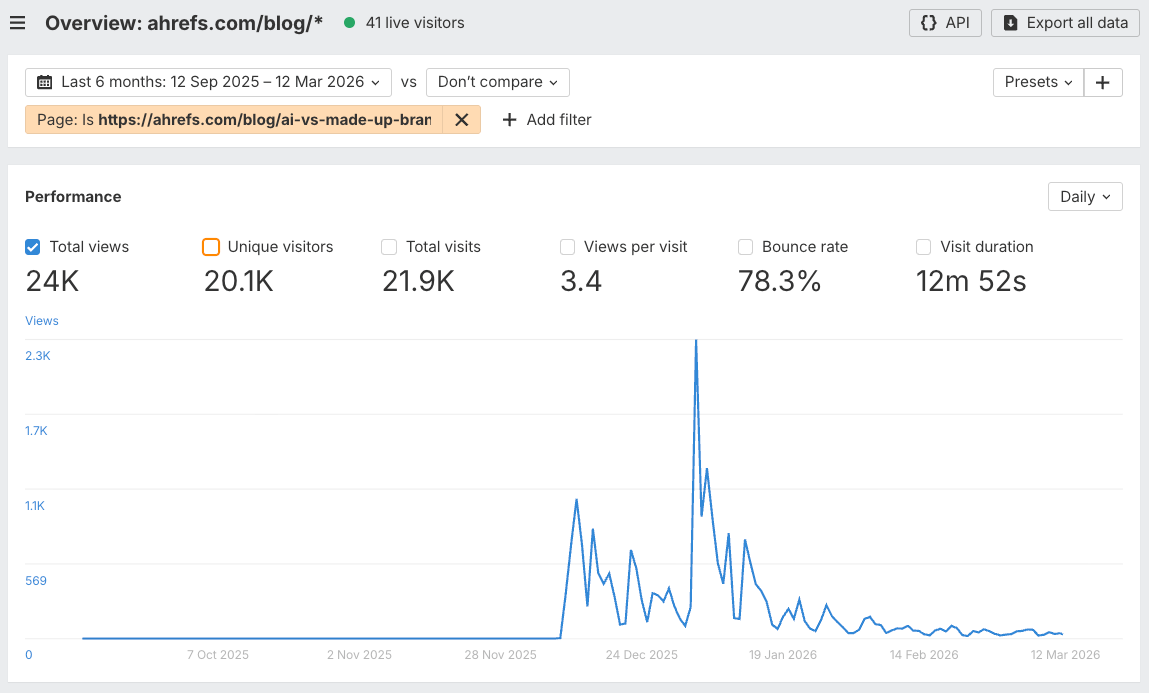

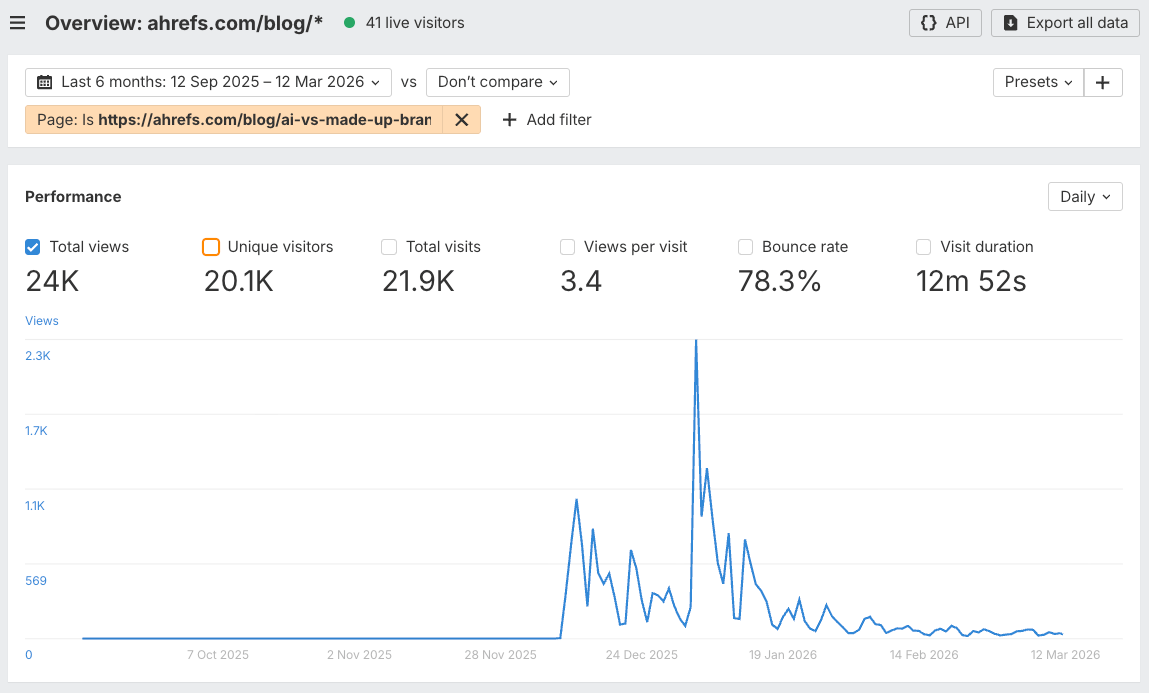

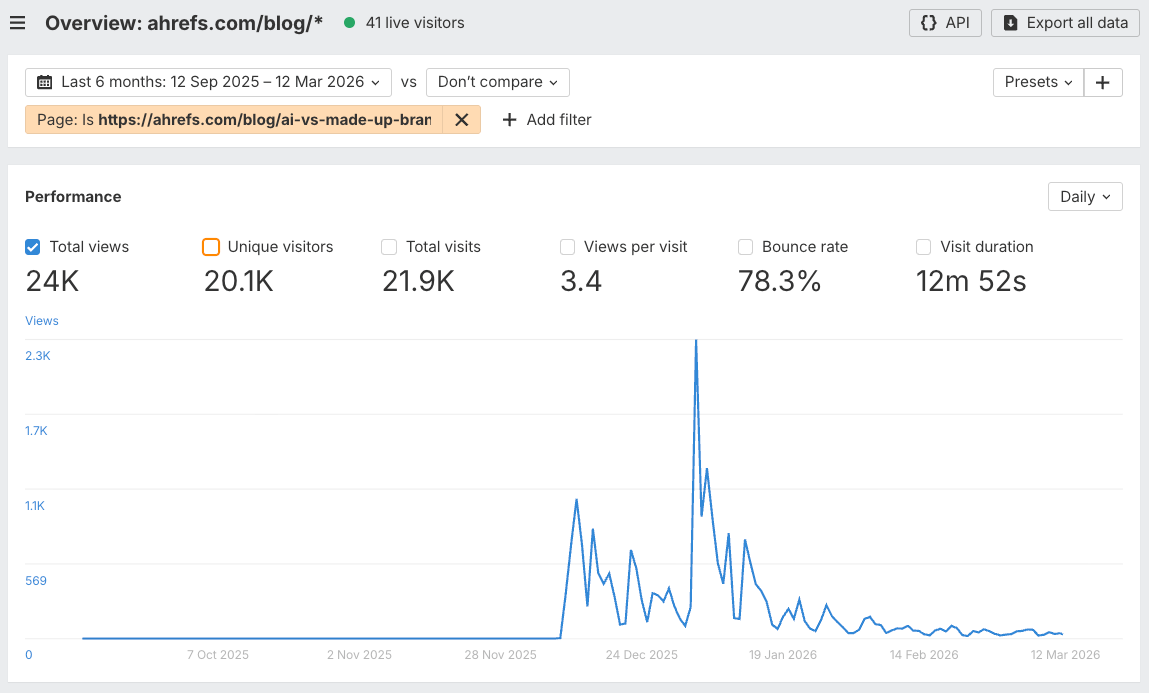

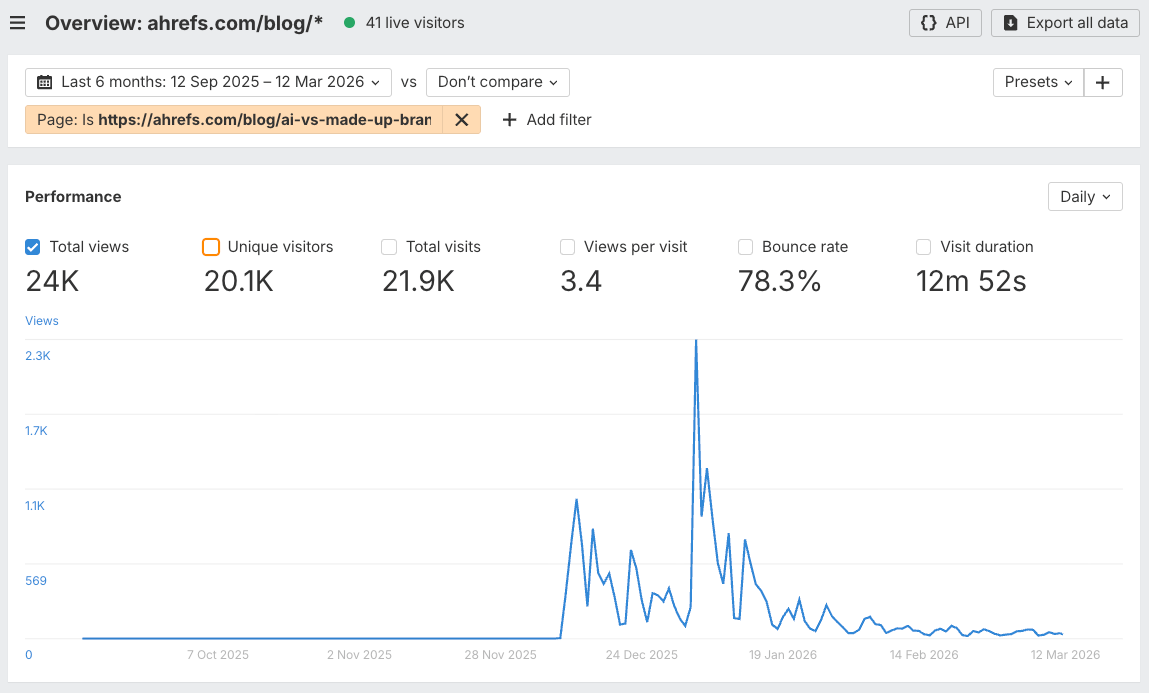

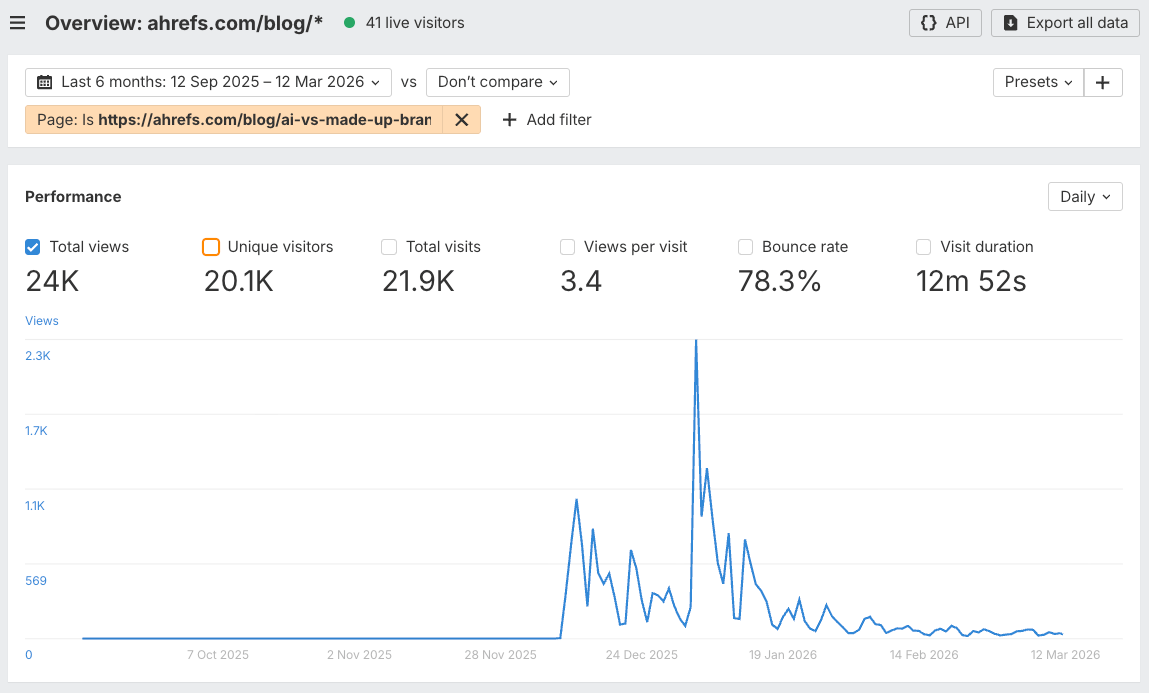

My AI misinformation experiment is an example: it ranked for nothing, but drove 24k visits and more social traction than I could count.

My solution: choose flexibility over convenience

The two content tracks need different approaches, and AI chatbots are the only tools flexible enough to handle both. So what you need is a process for creating documentation that you can easily share with AI.

For searchable content, audit your product documentation and help content. If an AI model can’t answer a basic question about your product using your own content, that’s a gap someone else will fill, accidentally or deliberately.
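A first pass at that audit can be done offline before asking any AI model. The sketch below is a crude proxy, not a real answerability test: it assumes a gap exists when no single document shares most of a question's key terms. The stopword list, the 0.6 threshold, and the sample docs and questions are all illustrative assumptions.

```python
import re
from typing import Dict, List, Set

# A tiny illustrative stopword list; a real audit would want a fuller one.
STOPWORDS = {
    "a", "an", "the", "is", "are", "do", "does", "how", "what", "can", "i",
    "my", "to", "in", "of", "for", "it", "your", "you", "with", "and", "or",
}


def key_terms(text: str) -> Set[str]:
    """Lowercase the text and keep the words that are not stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def find_gaps(questions: List[str], docs: Dict[str, str], min_overlap: float = 0.6) -> List[str]:
    """Return questions for which no document covers at least min_overlap of the key terms."""
    doc_terms = {name: key_terms(body) for name, body in docs.items()}
    gaps = []
    for question in questions:
        terms = key_terms(question)
        if not terms:
            continue
        best = max(
            (len(terms & covered) / len(terms) for covered in doc_terms.values()),
            default=0.0,
        )
        if best < min_overlap:
            gaps.append(question)
    return gaps


# Hypothetical help-center content and customer questions.
docs = {
    "billing.md": "You can export invoices as PDF from the billing page.",
    "setup.md": "Install the browser extension and sign in to connect your account.",
}
questions = [
    "How do I export invoices?",
    "Does it support single sign-on with Okta?",
]

gaps = find_gaps(questions, docs)
```

Questions that land in `gaps` are the ones worth testing against an actual AI assistant first.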

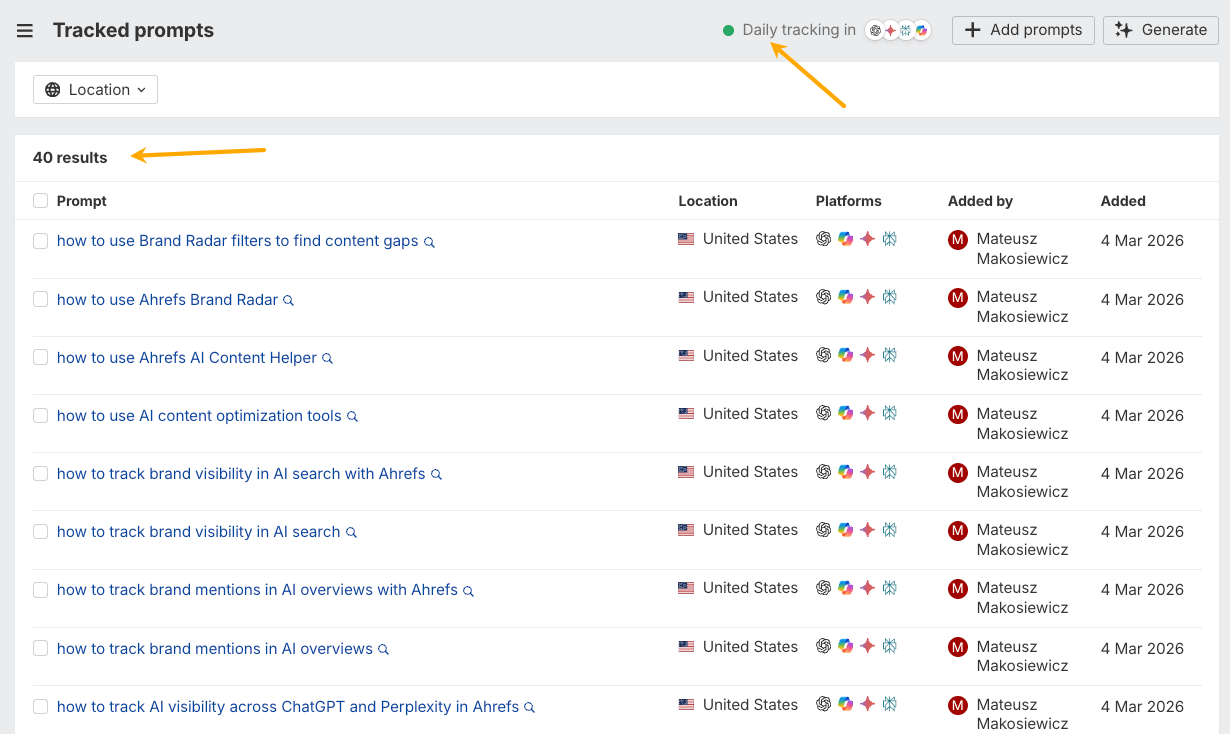

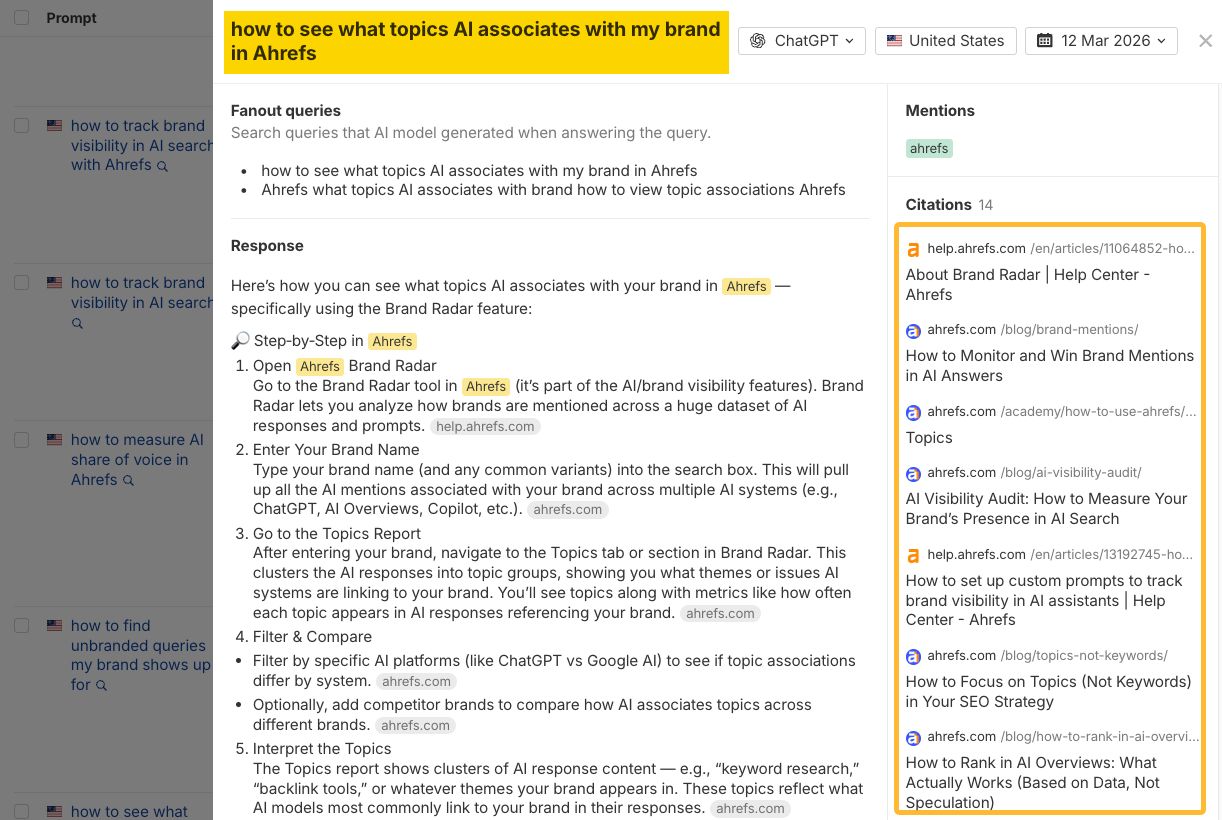

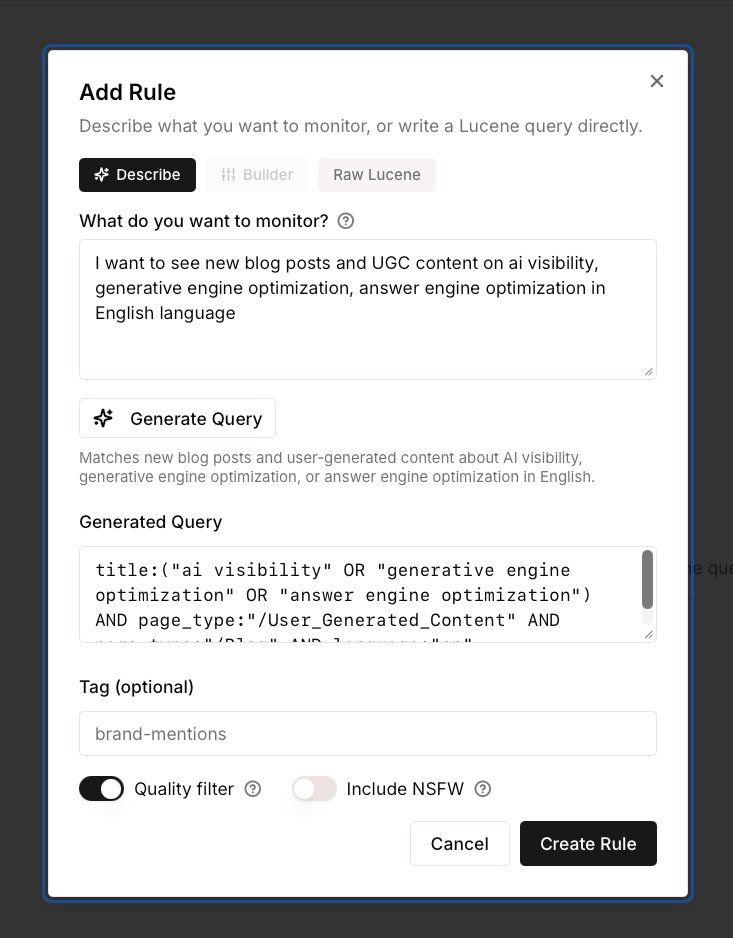

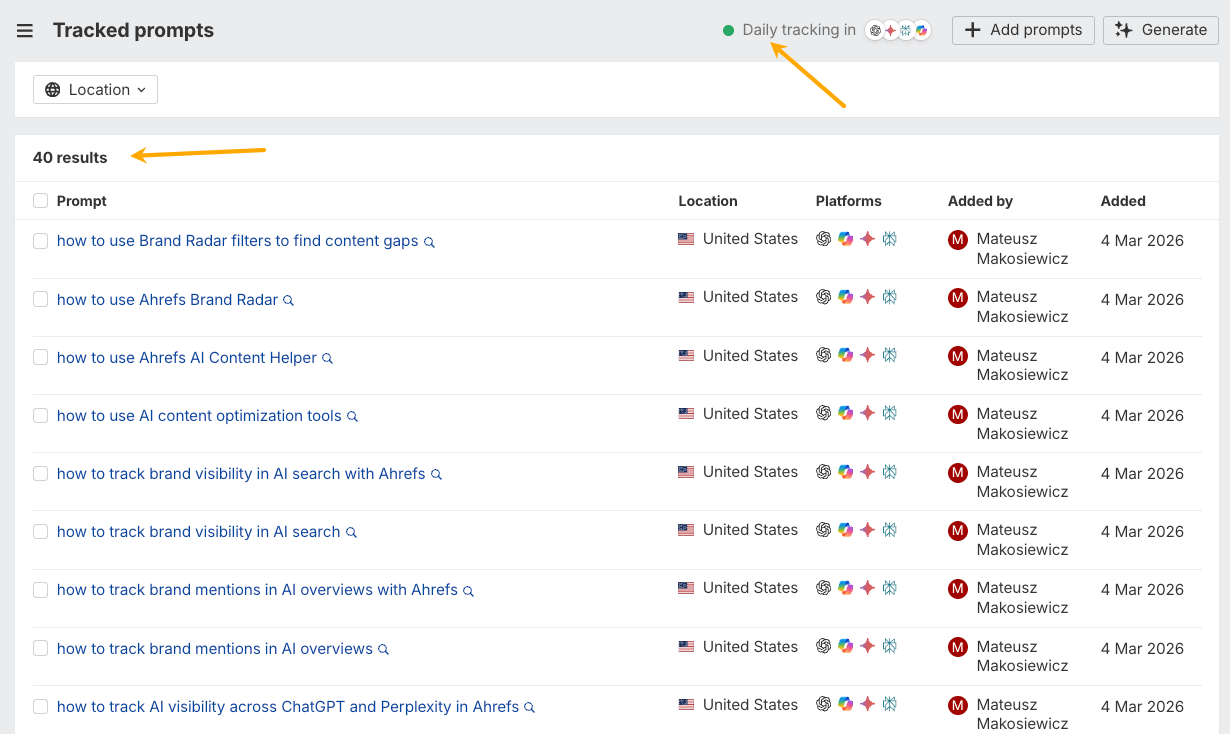

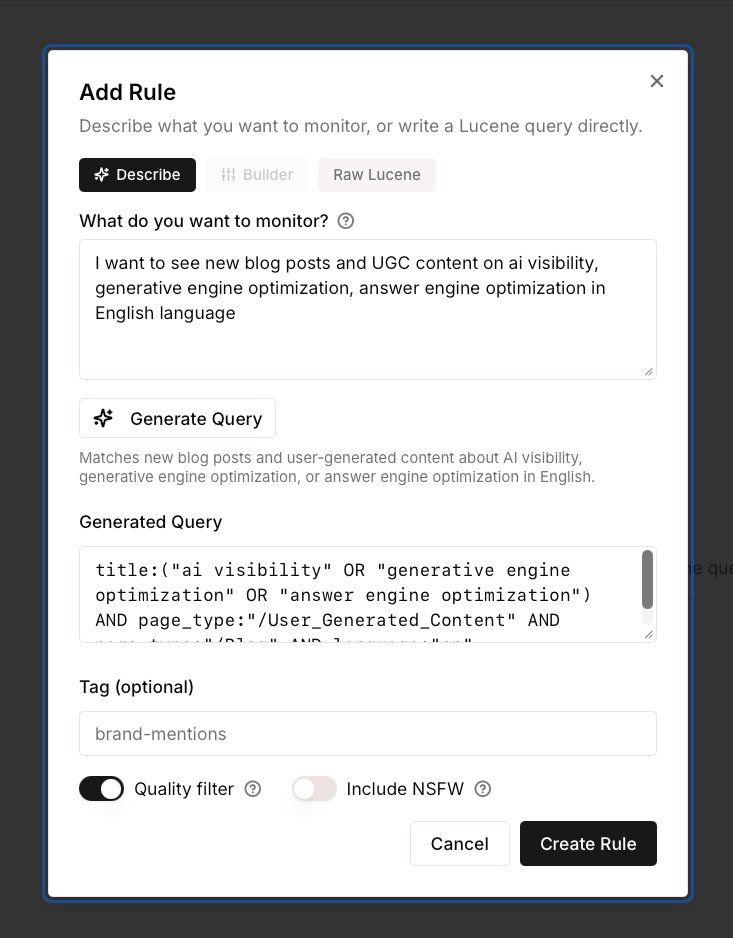

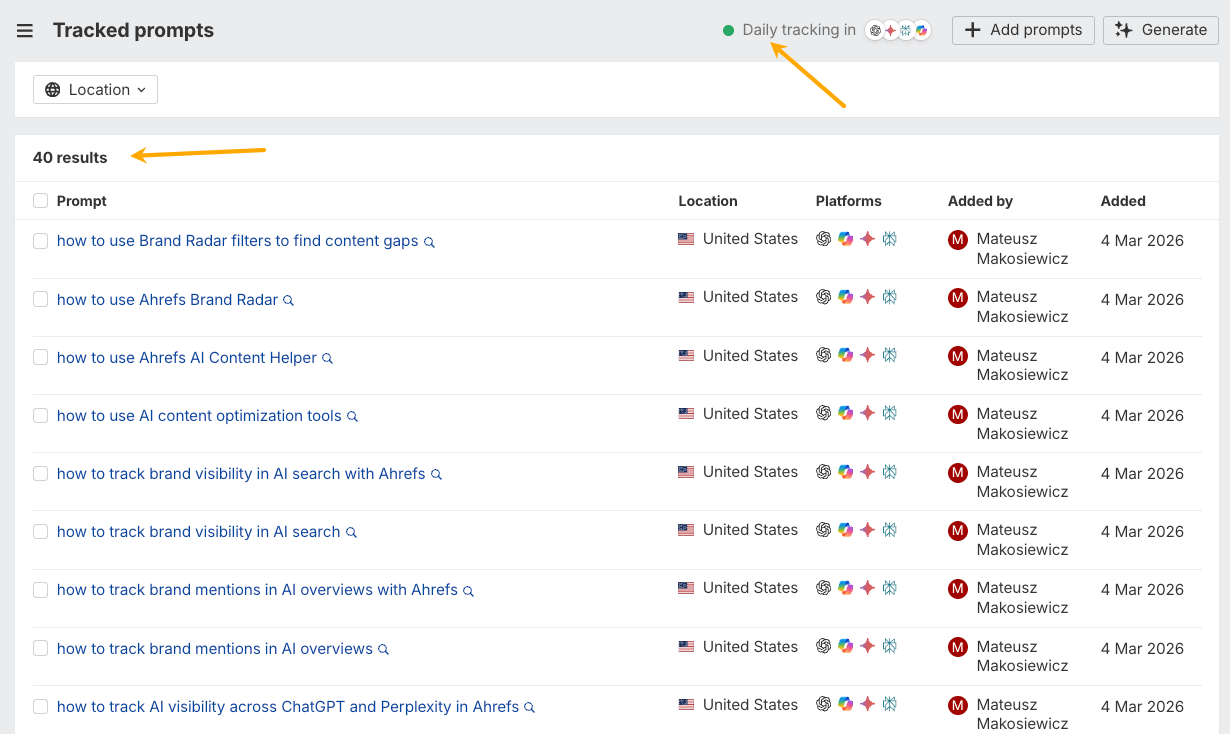

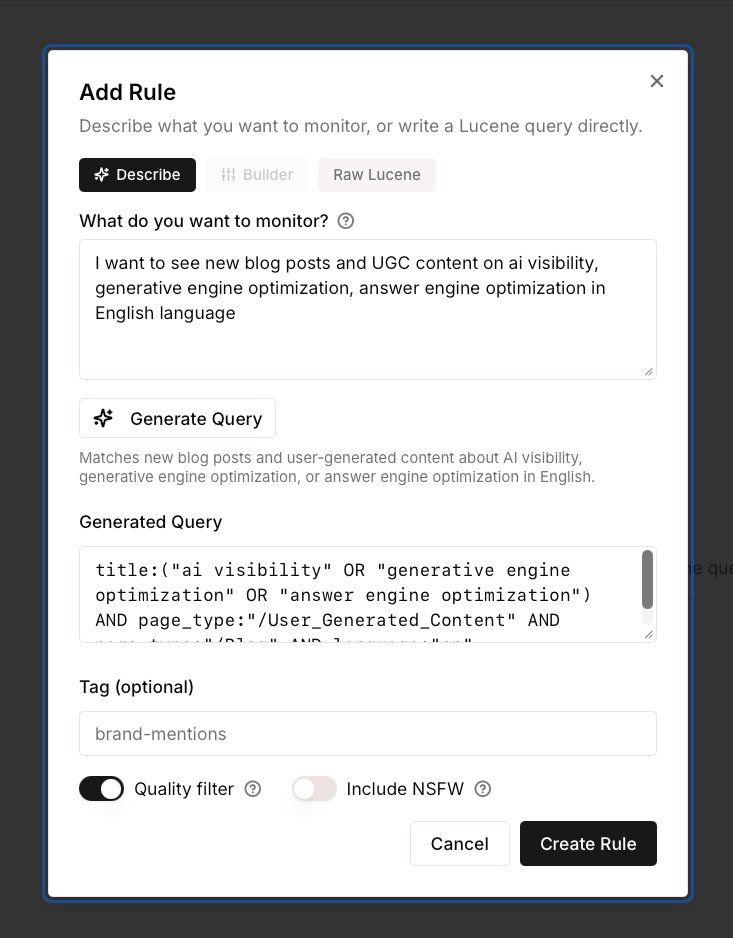

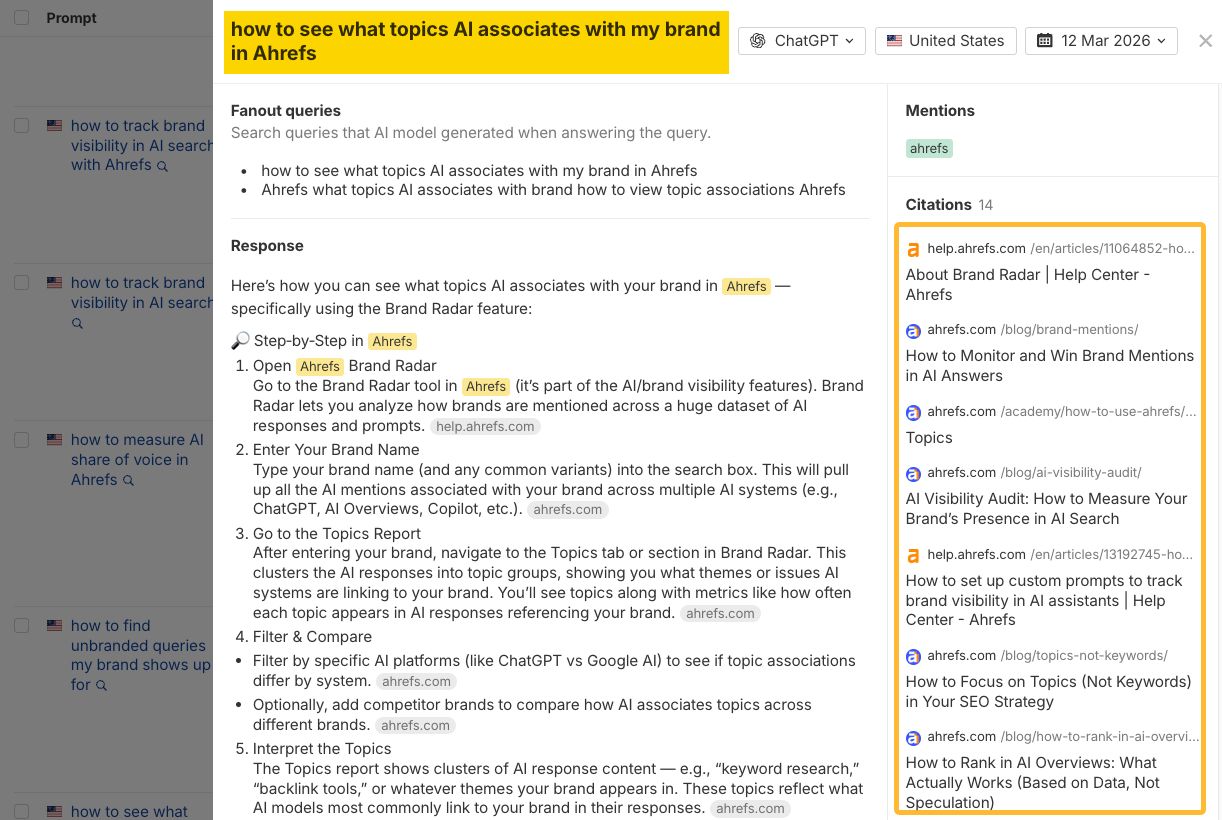

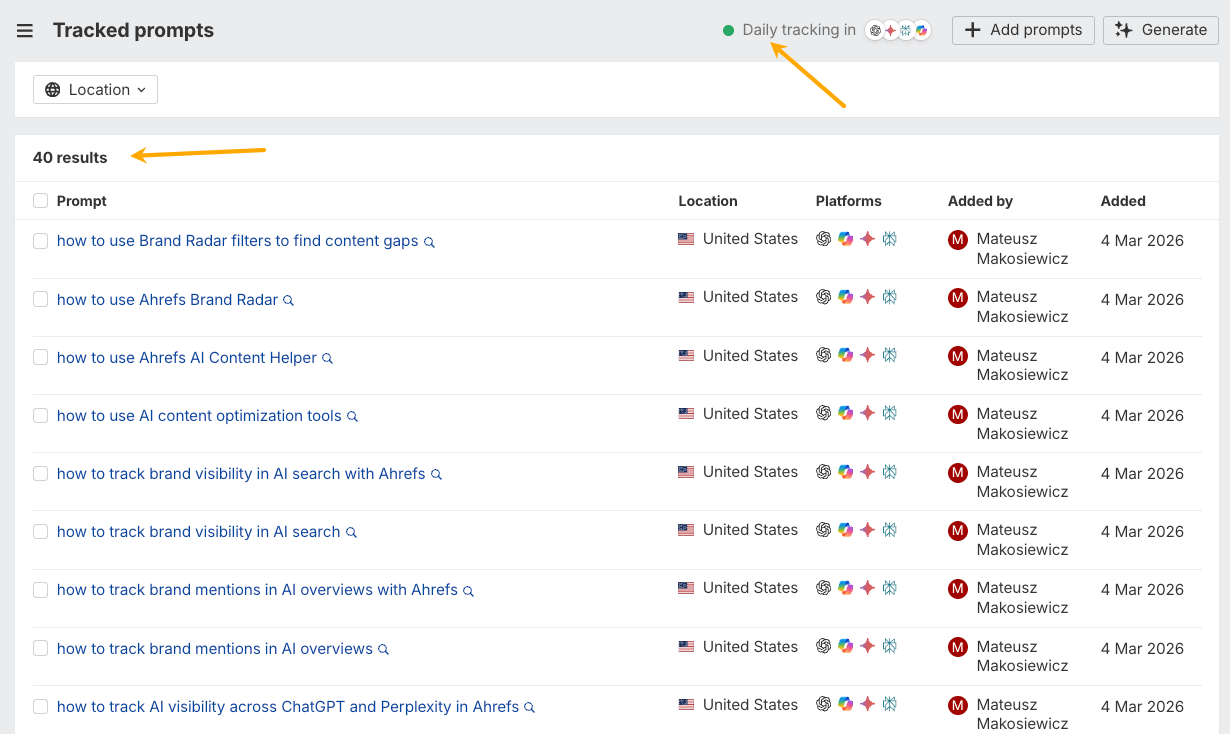

You can chat with the most popular AI assistants to spot holes, or set up tracking in a tool like Ahrefs Brand Radar to do it at scale.

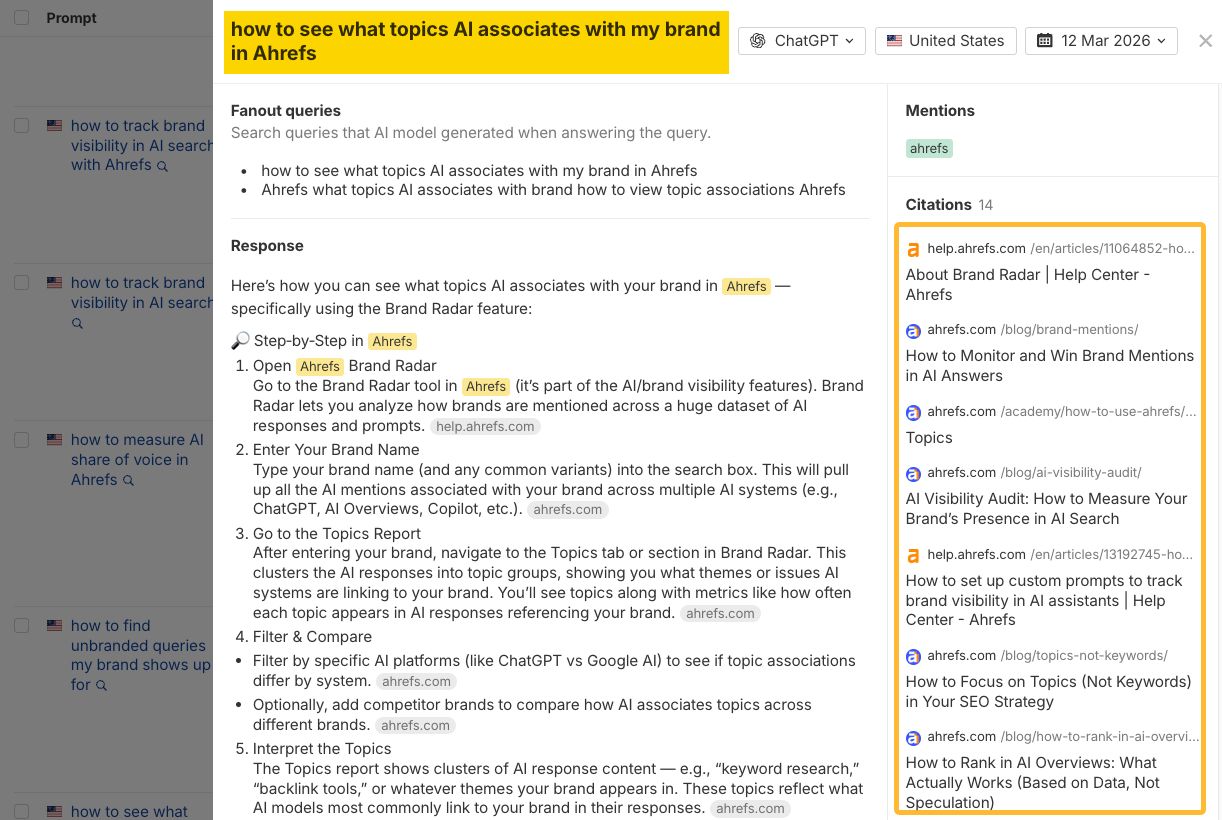

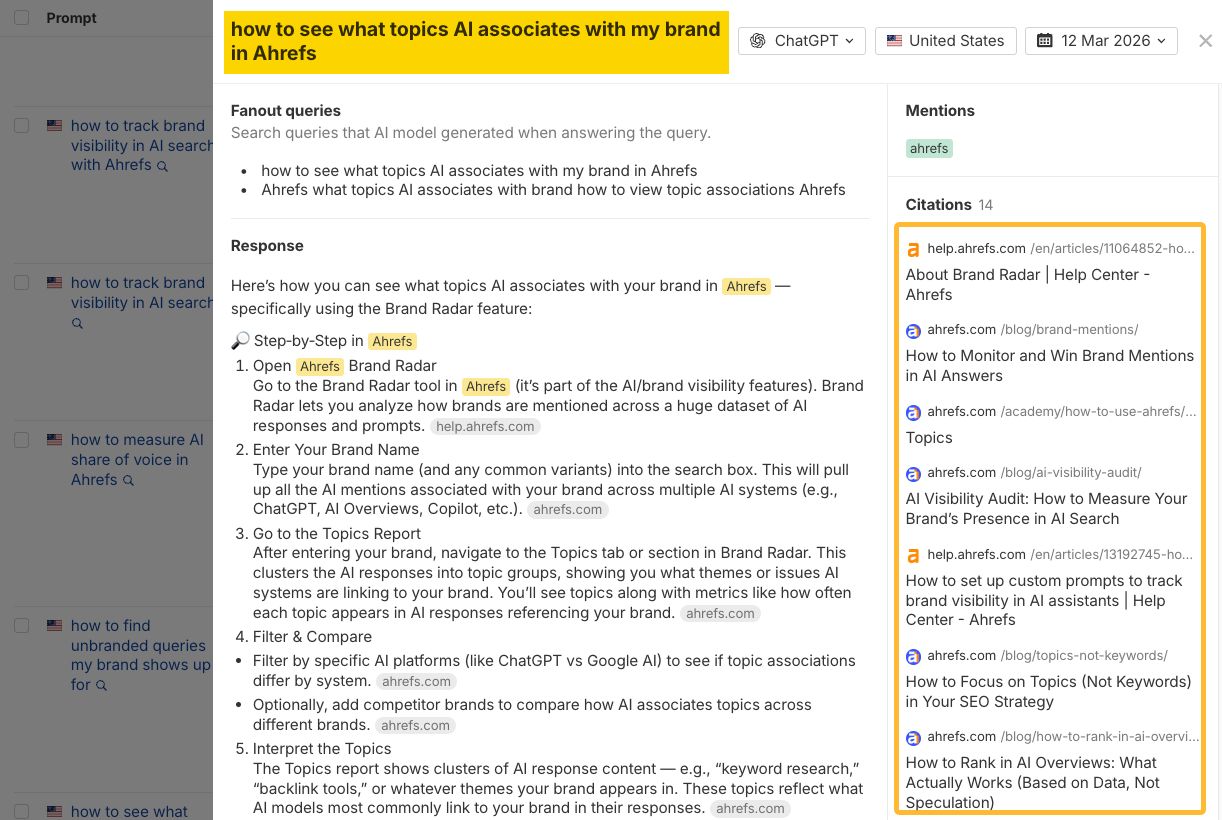

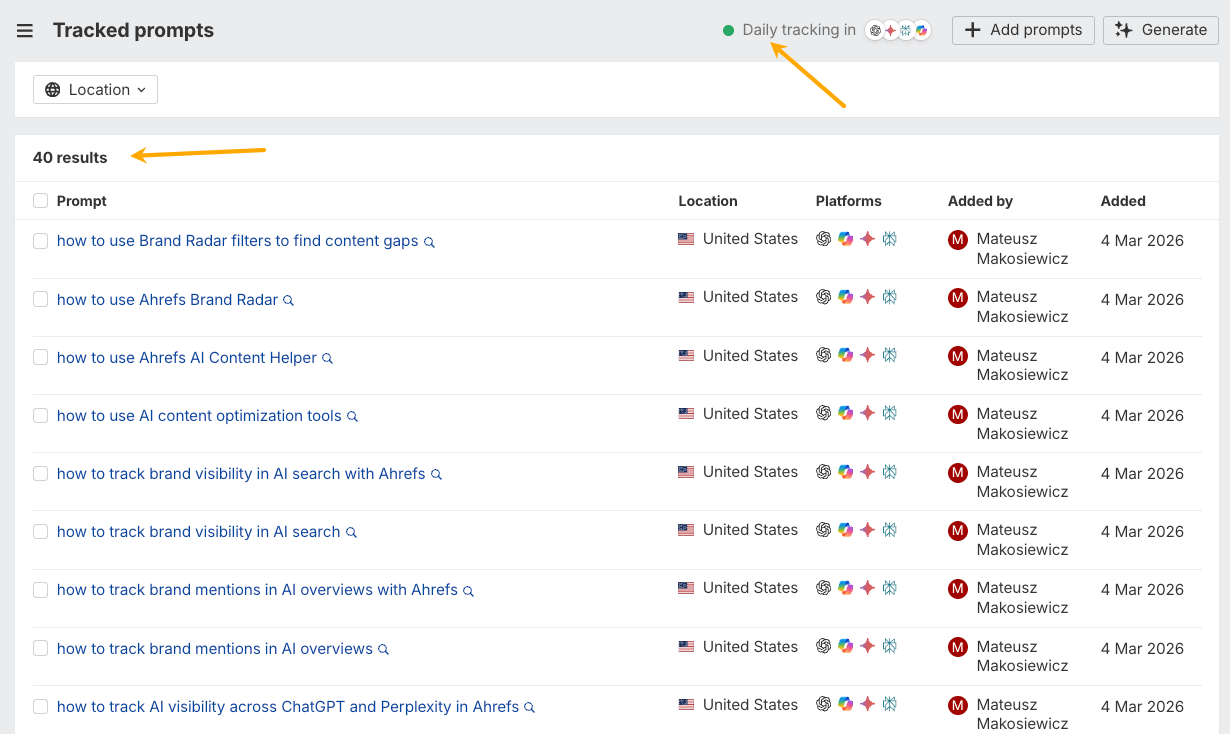

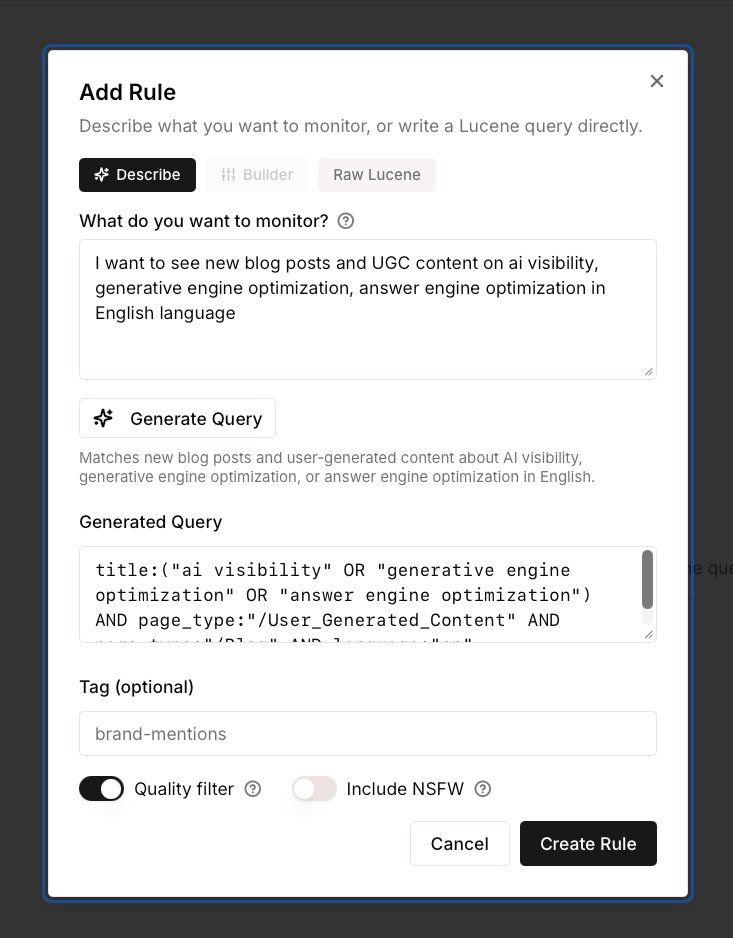

Adding custom prompts in Brand Radar.

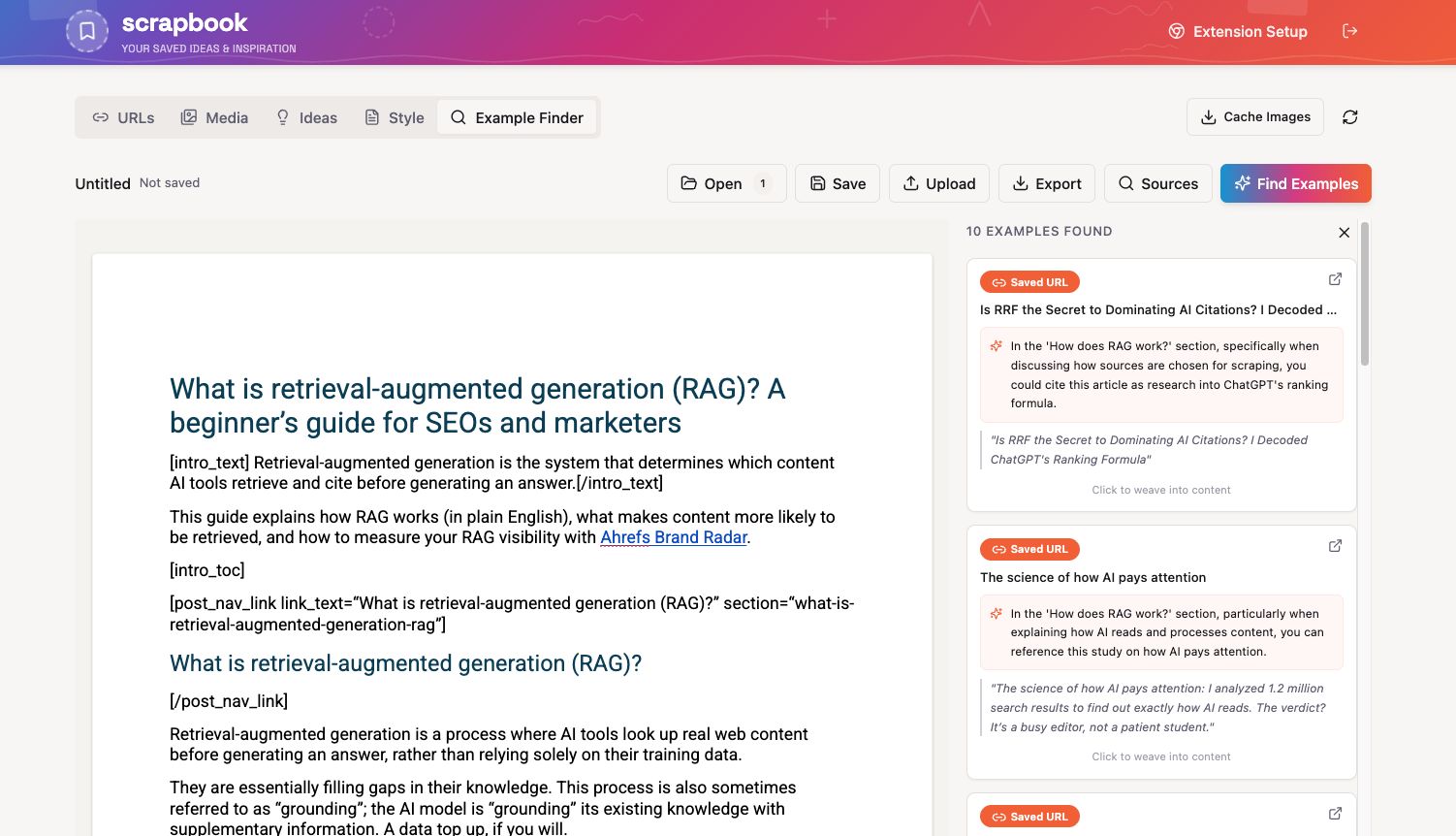

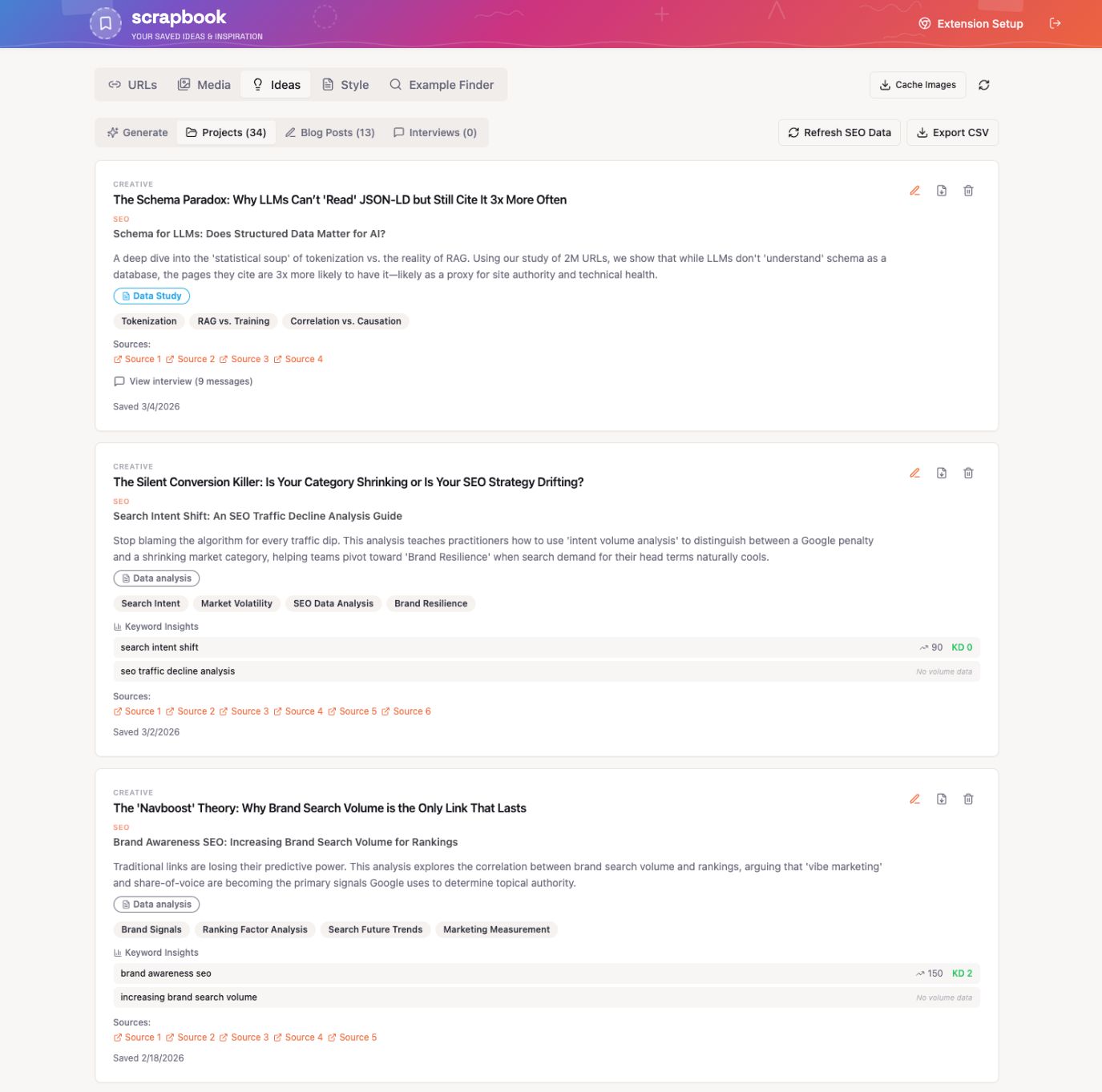

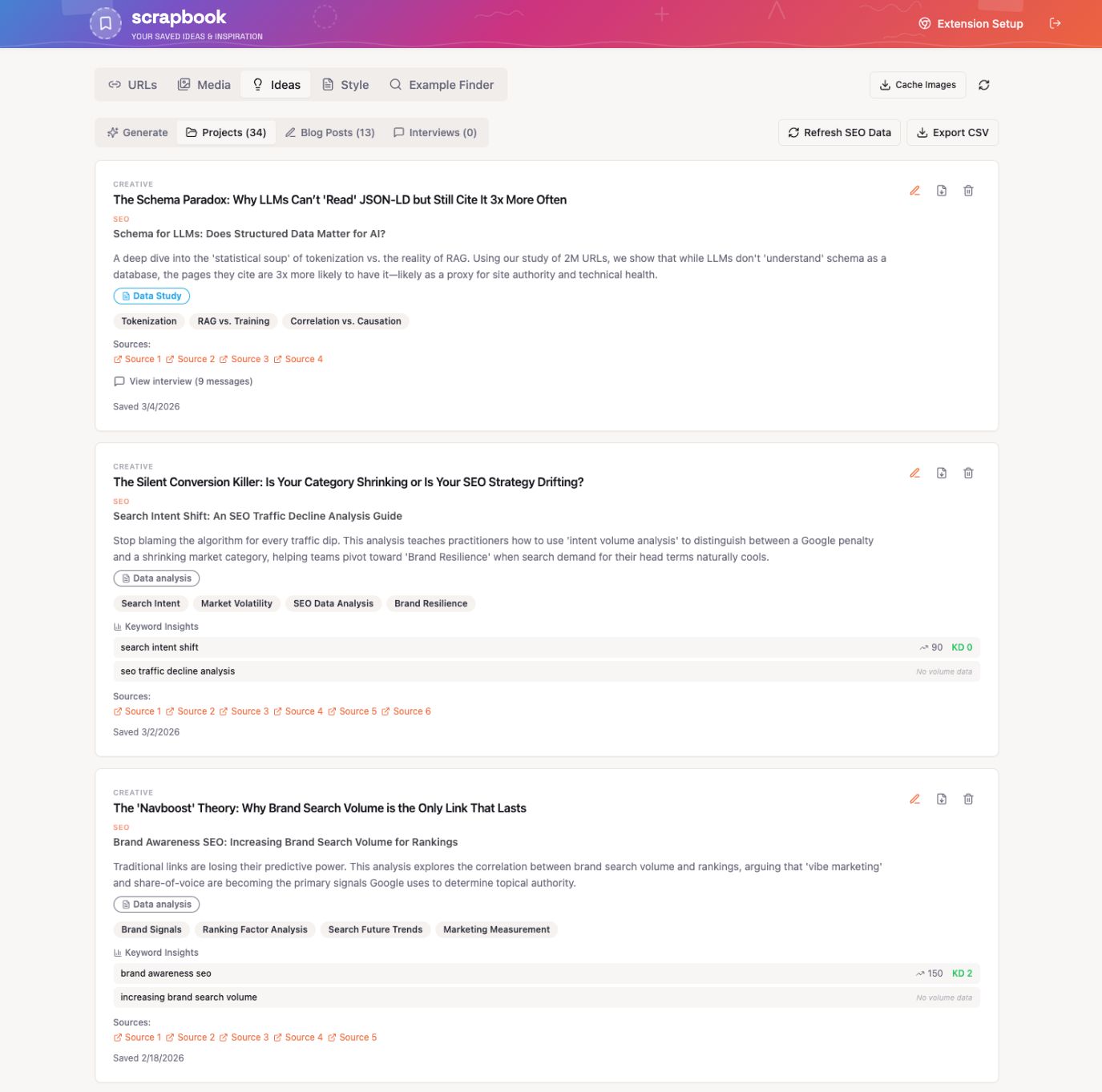

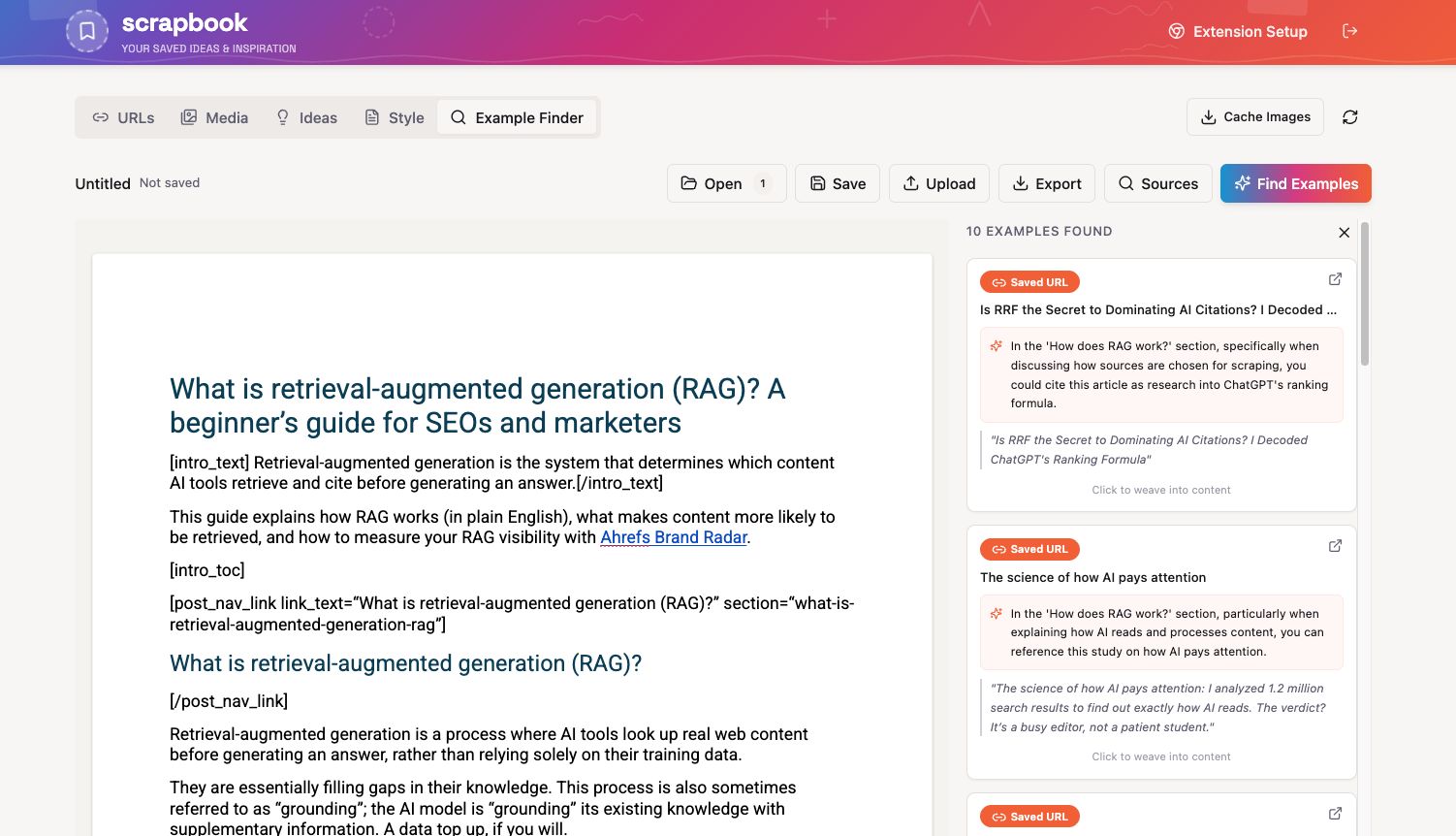

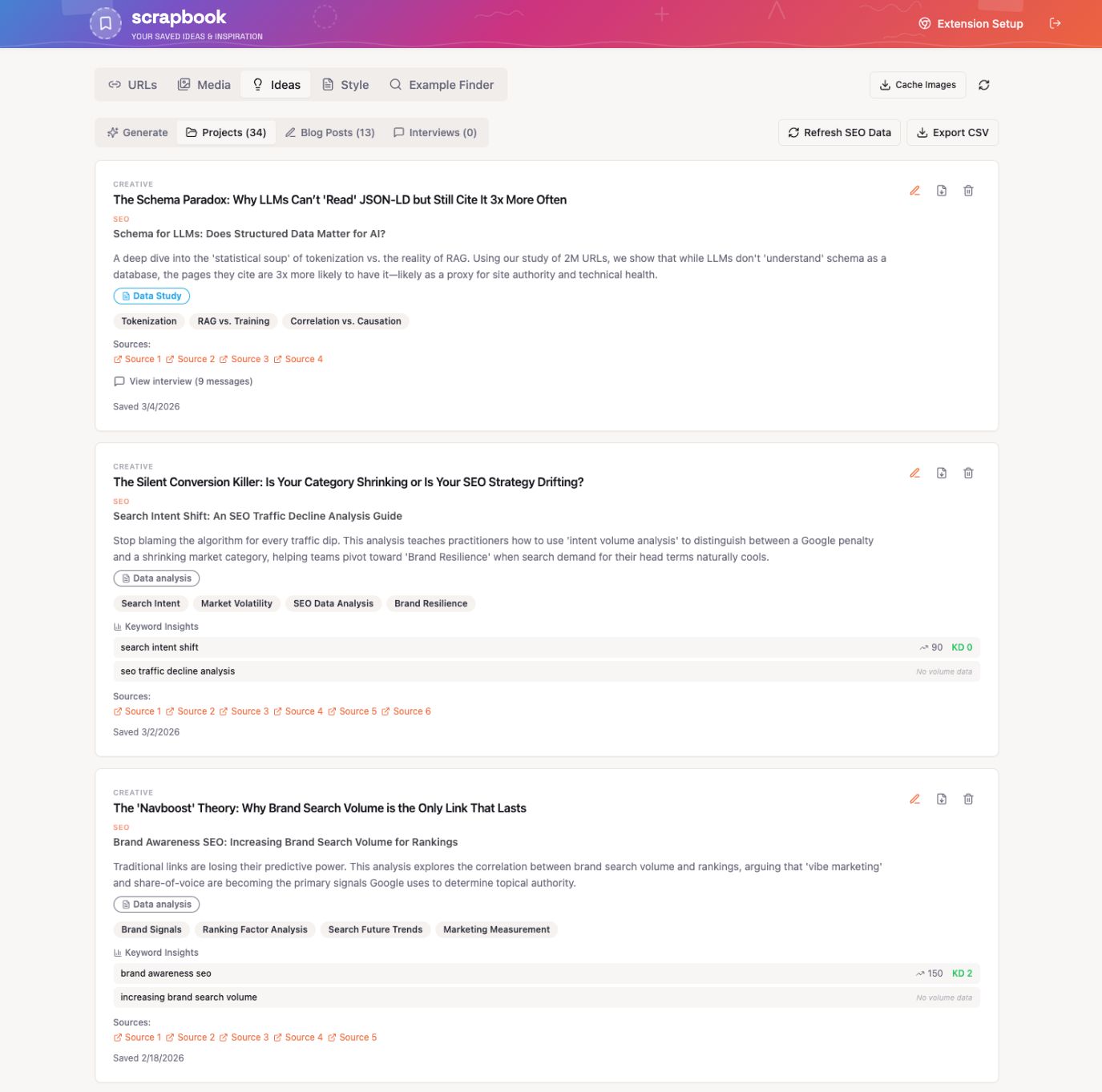

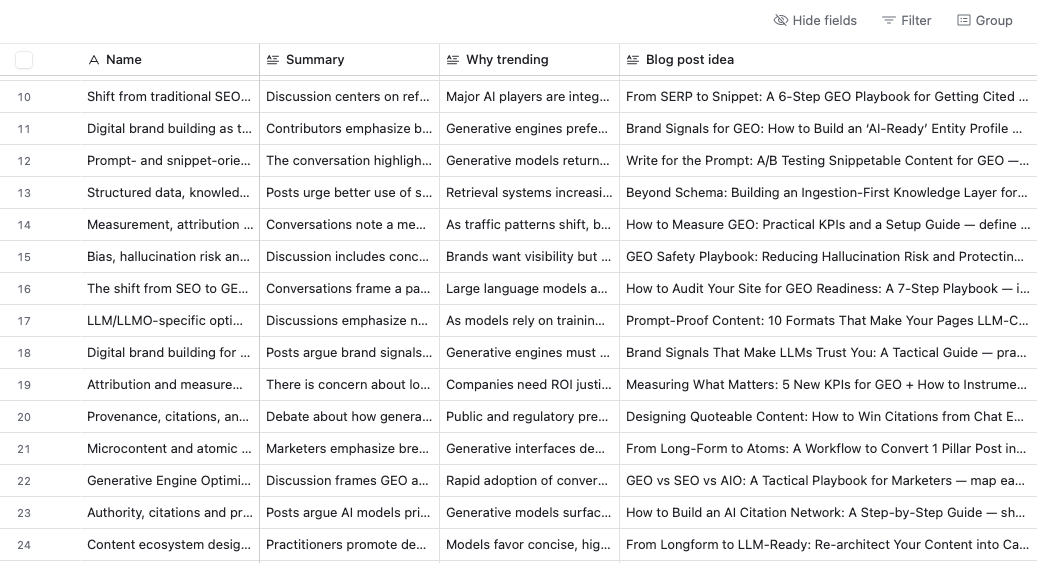

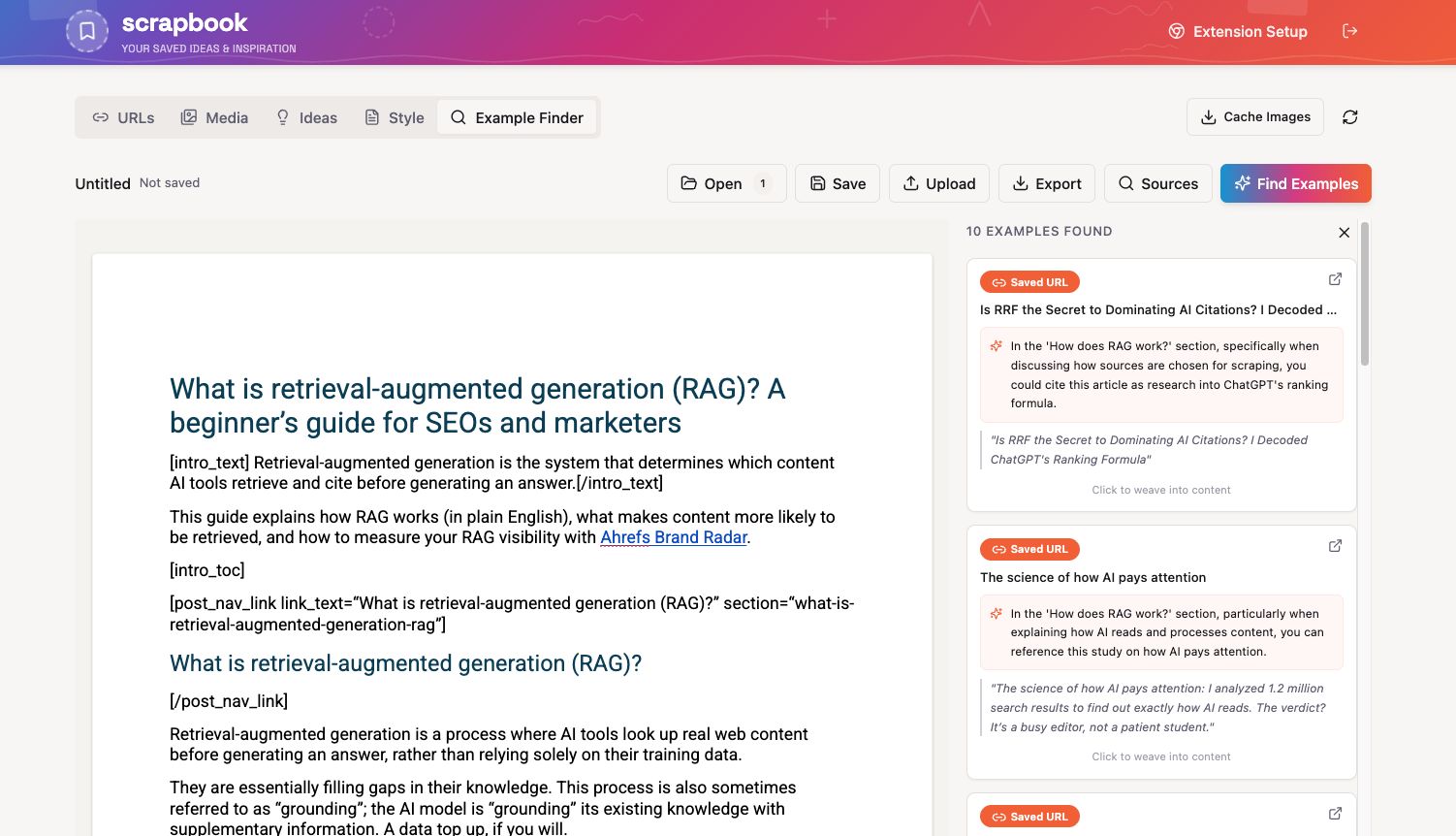

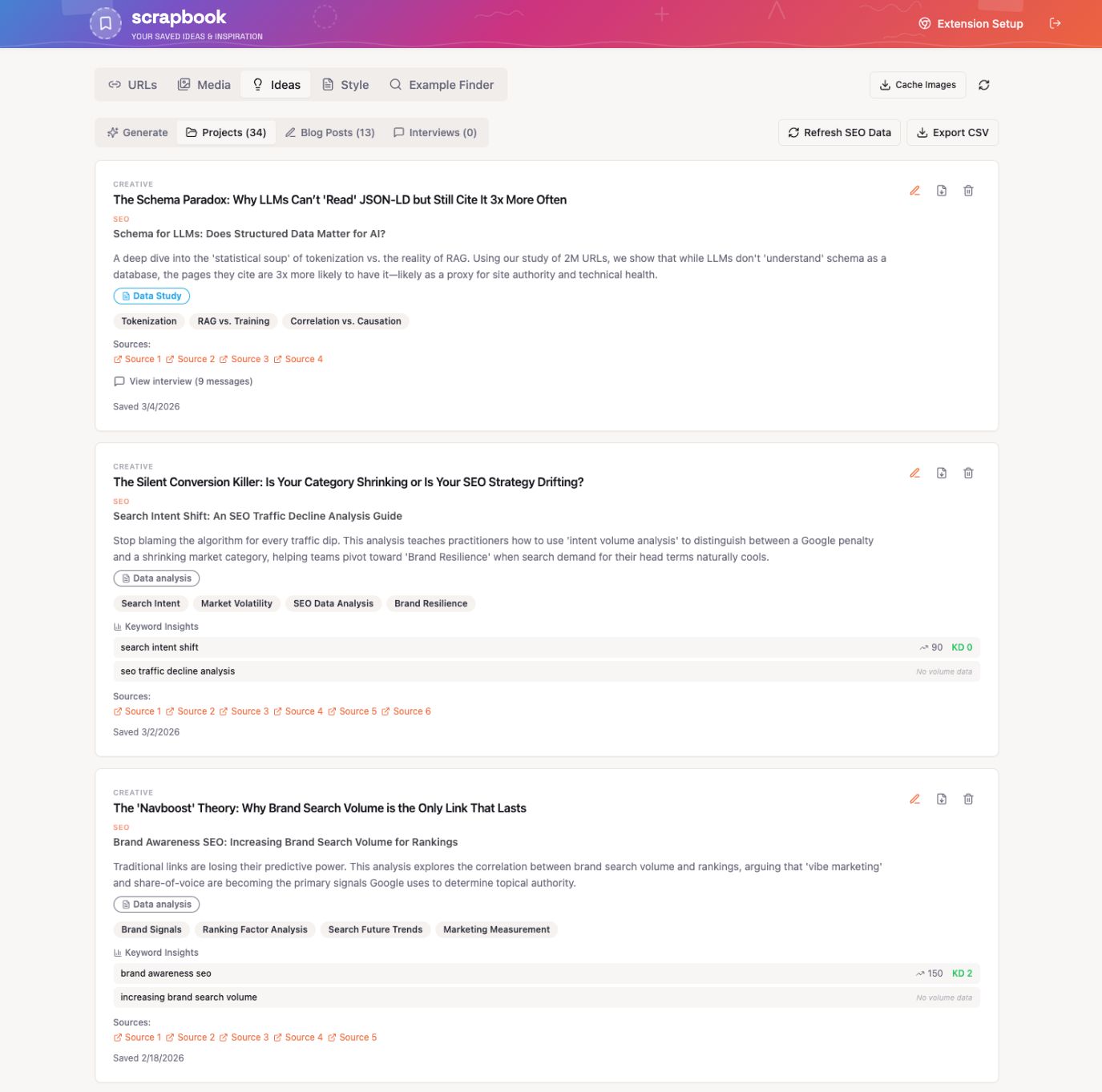

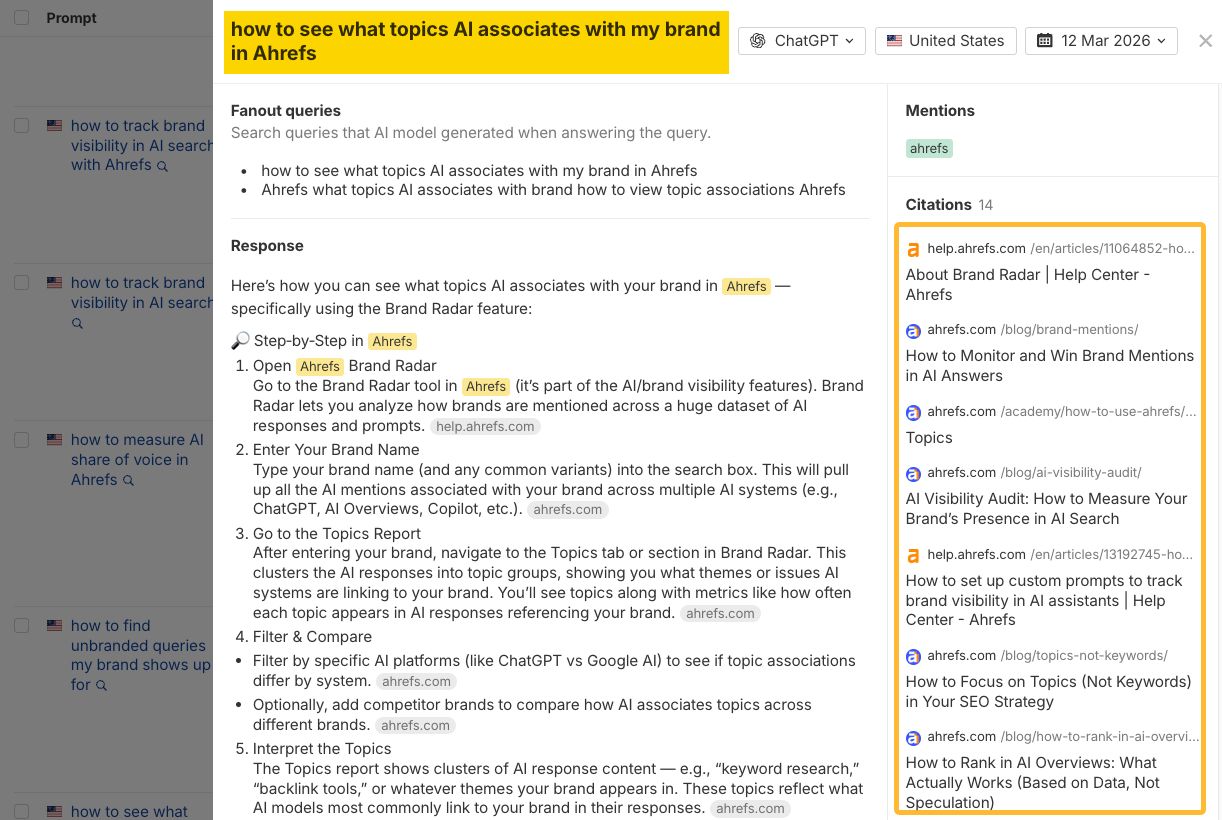

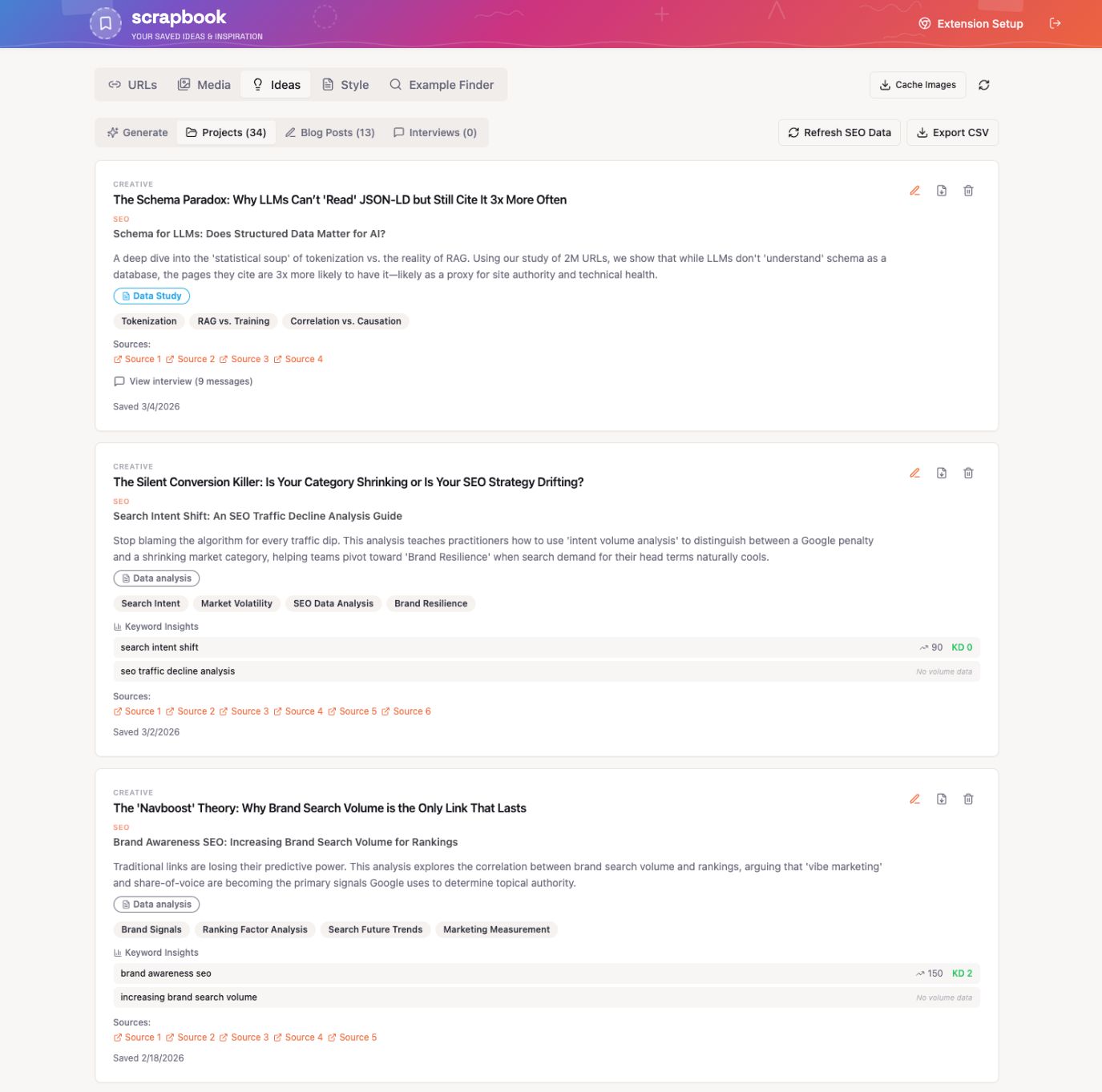

AI’s answer to a custom prompt, including citations.

For shareable content, build an idea pipeline. Start a scrapbook. Store ideas, facts, quotes, social posts, newsletter excerpts, and anything you might want to give AI access to later.

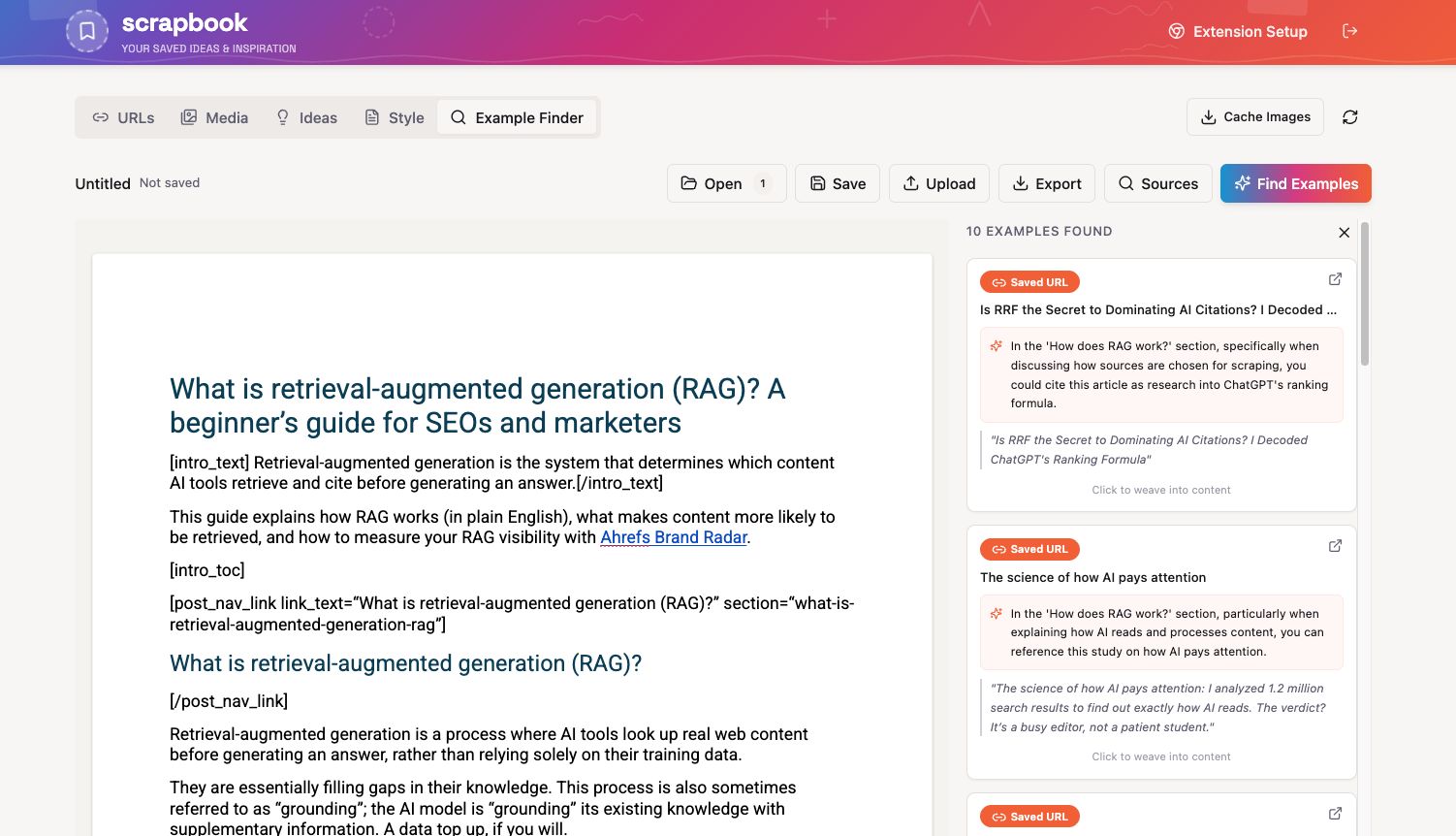

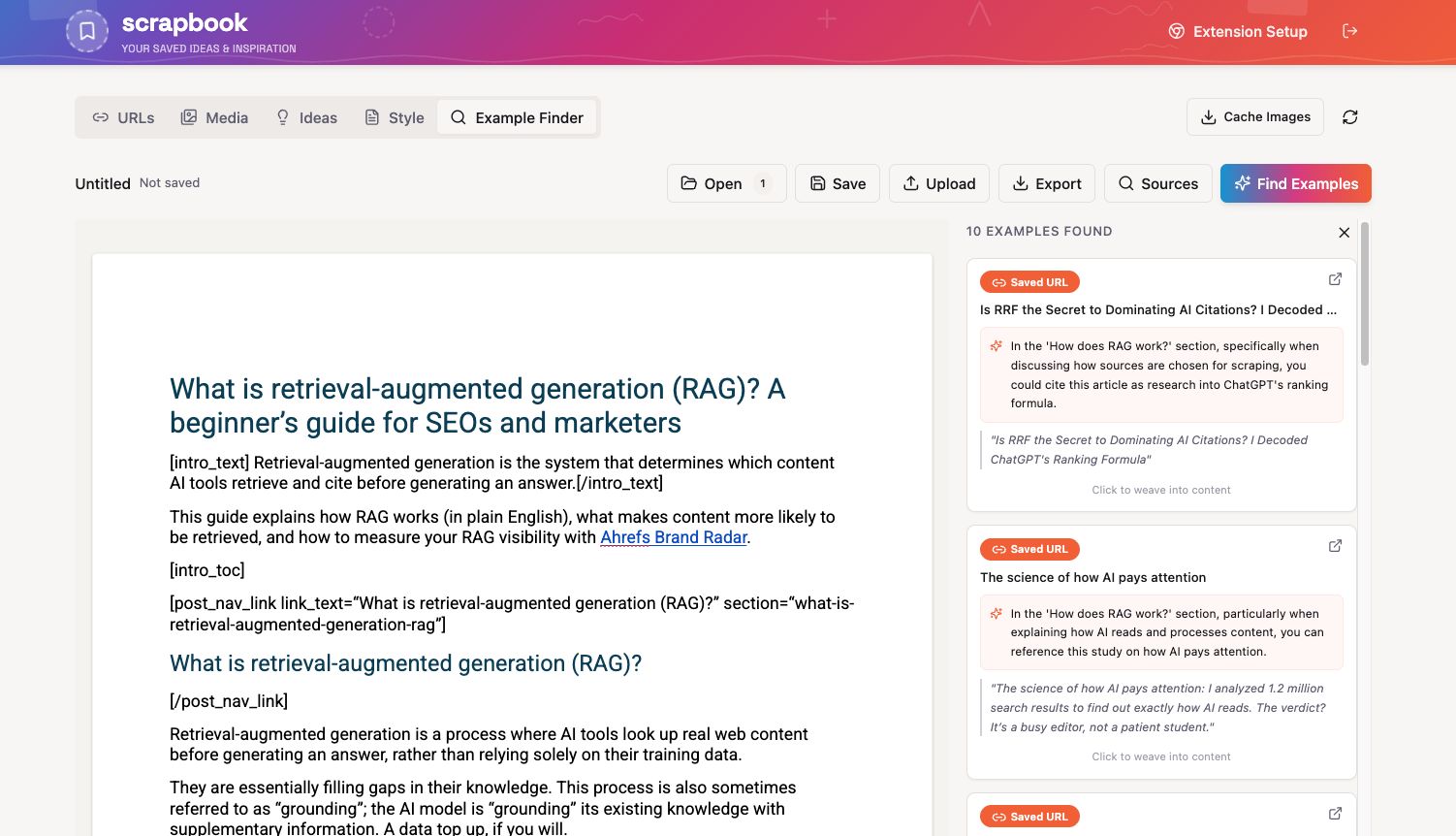

You can use Notion, Evernote, whatever suits you. But consider vibecoding a custom tool, as my colleague Louise did. That way, you can bake in features like an “example finder” that surfaces relevant support for claims in your writing, or one that generates content ideas from your material on the spot.

Example-finding feature.
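To make the “example finder” idea concrete, here is a minimal sketch of one way it could work. It is not Louise's tool: it assumes plain word overlap between a claim and each scrapbook note, where a real version would more likely use embeddings or an LLM. The `Note` type and the sample entries are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Note:
    source: str
    text: str


def tokens(text: str) -> Set[str]:
    """Lowercase words longer than three characters, as a cheap way to skip filler words."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) > 3}


def find_examples(claim: str, notes: List[Note], top_n: int = 2) -> List[Note]:
    """Return up to top_n notes that share the most words with the claim."""
    claim_terms = tokens(claim)
    scored = []
    for note in notes:
        shared = len(claim_terms & tokens(note.text))
        if shared:
            scored.append((shared, note))
    # Highest overlap first; notes with no shared words were already dropped.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [note for _, note in scored[:top_n]]


# Placeholder scrapbook entries (made up for illustration).
scrapbook = [
    Note("newsletter", "Reader survey: most marketers edit AI drafts heavily before publishing."),
    Note("linkedin", "Our webinar signups doubled after the redesign."),
    Note("scrapbook", "AI drafts without fact-checking led to two corrections last quarter."),
]

hits = find_examples("AI drafts need fact-checking", scrapbook)
```

Keeping the source on each note means whatever gets surfaced can be traced and verified before it goes into a draft.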

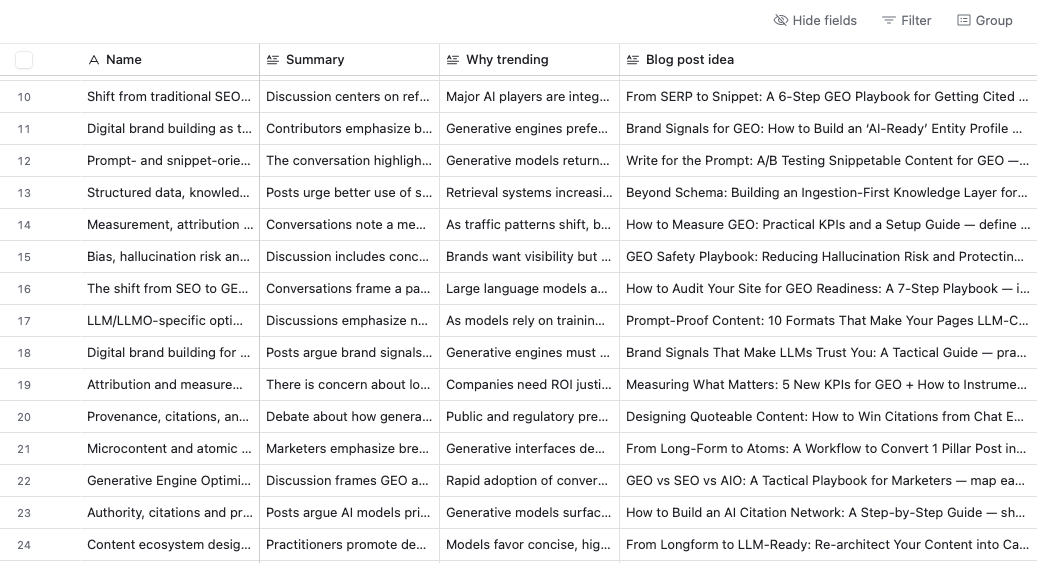

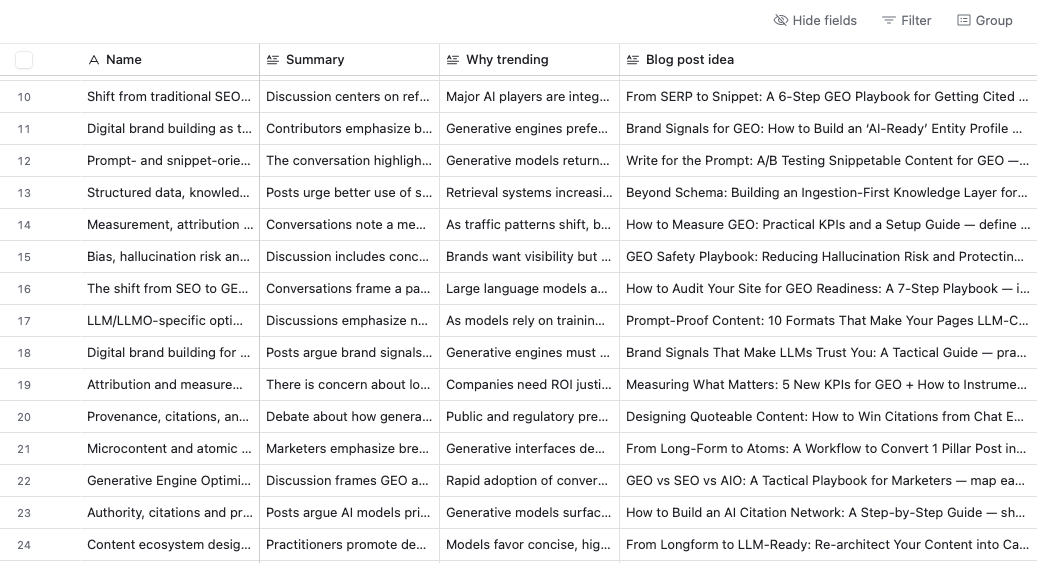

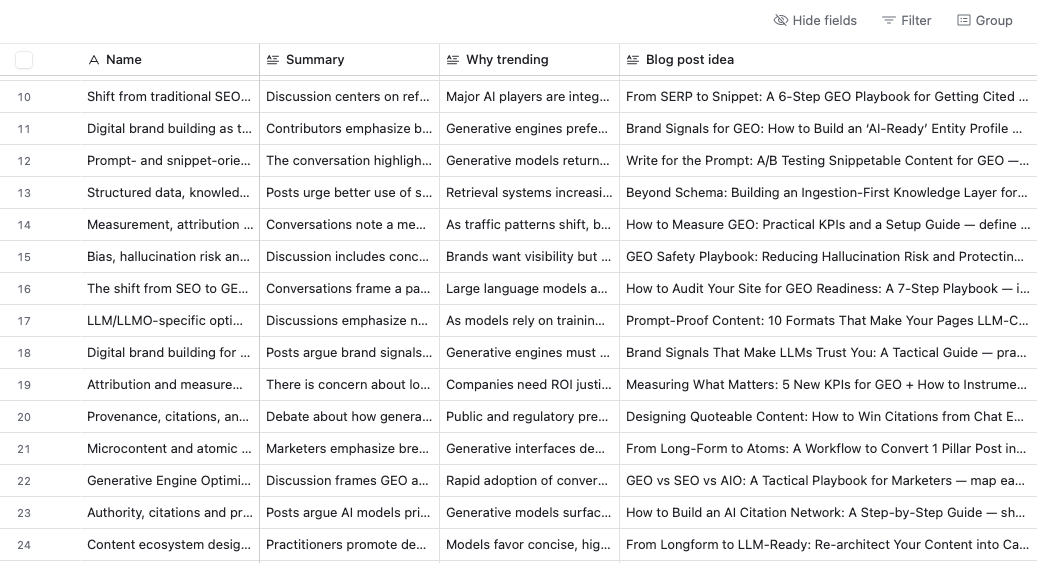

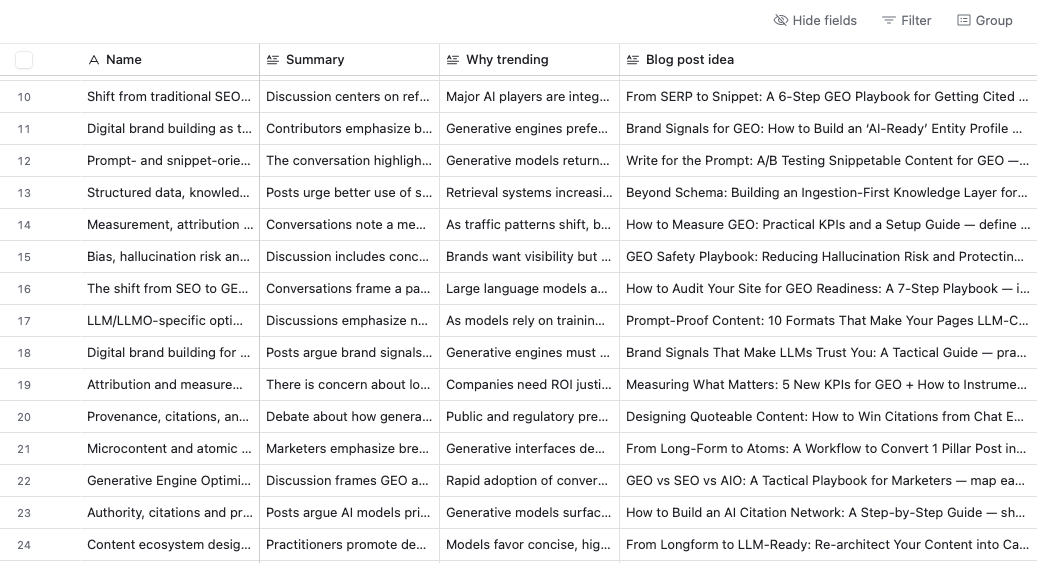

Idea-generating feature.

Another idea: set up an AI agent that scours the web for content ideas on a schedule. I built one with Relay that goes through LinkedIn and Reddit conversations (fair use) every 7 days. It helped me keep up with new content, which is coming out faster than ever, and stay sane.

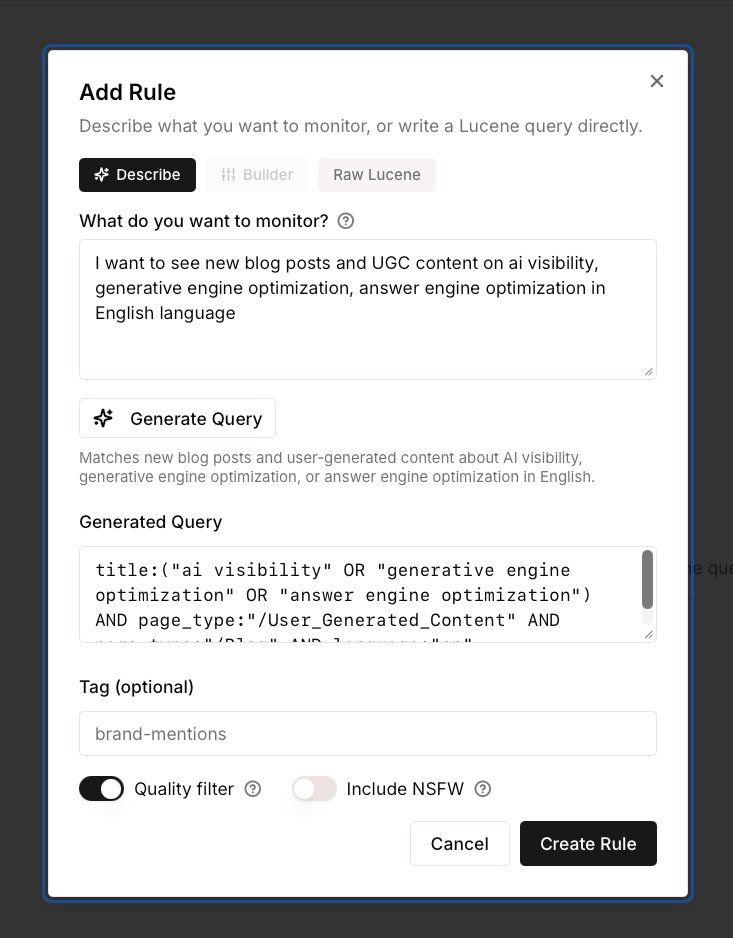

If you want to keep a constant pulse on new content in your space, try our new tool, Firehose. It streams the web in real time on any topic you define, with advanced filtering. You describe what you’re looking for in natural language, and it’s ready to go. You can also connect it to your AI agents through the API.

Final thoughts

If you take one thing from this article, make it this: invest in what you feed the AI, not in the tool that generates from it. Build your source-of-truth files before you write a single word. Keep your judgment in the loop: use conversations, not buttons. Spend on inputs, not wrappers. Use coding-capable AI to maintain your content at scale.

The people producing the best AI-assisted content in a year’s time will be working from better information and better judgment. I suspect some teams are already there. I think we’ll all be more knowledge curators than writers in the traditional sense.

The full breakdown of the 40-article experiment I mentioned in the intro is coming in a separate piece.

Thanks for reading! If you have any questions or comments, let me know on LinkedIn.
